Analytics in the Cloud

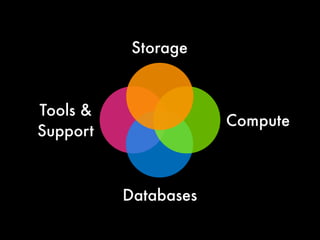

Elastic storage and compute services provide a firm foundation on which to build systems to drive value from data. This presentation discusses how to run analytics pipelines on the AWS Cloud, from data storage with S3 and DynamoDB to high-scale computation with Elastic MapReduce and Cluster Compute instances on EC2.

Analytics in the Cloud

- 1. Storage Tools & Compute Support Databases

- 2. Compute Storage Tools & Support Databases

- 3. Analytics

- 4. Let’s talk about data

- 8. Data is in flux

- 9. Data is fast moving

- 10. Capturing and managing data is challenging

- 11. Lots of data

- 12. Lots of data, lots of uses

- 13. Lots of data, lots of uses, lots of users

- 14. Lots of data, lots of uses, lots of users, lots of locations

- 15. Cost

- 16. Force multiplier

- 17. Additional value

- 18. Additional value: Click through; Social graph; Log files; Audit trails; Customer usage; Transcoding

- 20. Analytics: Data intensive, scale out; Tightly coupled

- 21. Analytics: Data intensive, scale out; Tightly coupled

- 22. Hadoop

- 23. Elastic MapReduce Managed Hadoop

- 25. S3 input data

- 26. S3 input data; Code; Elastic MapReduce

- 27. S3 input data; Code; Elastic MapReduce; Name node

- 28. S3 input data; Code; Elastic MapReduce; Name node; Elastic cluster

- 29. S3 input data; Code; Elastic MapReduce; Name node; Elastic cluster; HDFS

- 30. S3 input data; Code; Elastic MapReduce; Name node; Elastic cluster; HDFS; Queries + BI via JDBC, Pig, Hive

- 31. S3 input data; Code; Elastic MapReduce; Name node; Elastic cluster; HDFS; Queries + BI via JDBC, Pig, Hive; Output: S3 + SimpleDB

- 32. S3 input data; Output: S3 + SimpleDB

- 47. It’s all just Hadoop

- 49. API driven

- 50. Data movement

- 51. Import/Export

- 52. Large object support

- 53. Multipart upload
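
Multipart upload splits a large object into parts that are uploaded independently and then assembled by S3. A minimal sketch of the part-planning step follows, assuming S3's documented limits of a 5 MiB minimum part size (except for the last part) and at most 10,000 parts per upload. The function name `plan_parts` and the default part size are hypothetical, and the actual upload calls are left out.

```python
import math

MIN_PART_SIZE = 5 * 1024 * 1024   # S3 minimum for every part except the last
MAX_PARTS = 10_000                # S3 maximum number of parts per upload


def plan_parts(object_size, part_size=64 * 1024 * 1024):
    """Return (part_number, start_offset, length) tuples covering the object.

    Hypothetical helper: it only plans byte ranges and never talks to S3.
    """
    if object_size <= 0:
        raise ValueError("object_size must be positive")
    if part_size < MIN_PART_SIZE:
        raise ValueError("part_size is below the 5 MiB minimum")
    # Grow the part size if the object would otherwise need too many parts.
    part_size = max(part_size, math.ceil(object_size / MAX_PARTS))
    parts = []
    offset = 0
    number = 1  # S3 part numbers start at 1
    while offset < object_size:
        length = min(part_size, object_size - offset)
        parts.append((number, offset, length))
        offset += length
        number += 1
    return parts


# Example: a 200 MiB object with the default 64 MiB part size.
for part in plan_parts(200 * 1024 * 1024):
    print(part)
```

Each planned range could then be uploaded in parallel and retried on its own, which is the main appeal of multipart upload for large objects.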

- 54. Scale control

- 55. Resize running job flows

- 56. 14 hours Time remaining: 14 hours

- 57. 14 hours Time remaining: 7 hours

- 58. Time remaining: 3 hours
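
The three slides above show the estimated time remaining falling as the running job flow is resized. A rough sketch of the arithmetic follows, assuming the remaining work is fixed and throughput scales linearly with node count, which real jobs rarely achieve. The node counts are hypothetical, since the slides give only the times.

```python
def hours_remaining(node_hours_left, nodes):
    """Estimate wall-clock hours left, assuming perfectly linear scaling."""
    if nodes <= 0:
        raise ValueError("nodes must be positive")
    return node_hours_left / nodes


# Hypothetical starting point: 4 nodes with 14 hours left = 56 node-hours.
work = 14 * 4
for nodes in (4, 8, 16):
    print(f"{nodes:>2} nodes: {hours_remaining(work, nodes):.1f} hours remaining")
```

Under these assumptions, doubling the cluster twice gives 14, 7, and 3.5 hours, roughly in line with the 14, 7, and 3 hours on the slides.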

- 59. Balance cost and performance

- 60. Resize based on usage patterns

- 61. Steady state Steady state Batch processing

- 62. Spot

- 63. Integrated with DynamoDB

- 64. Integrate

- 65. Backup and restore

- 66. HiveQL

- 67. Live data in DynamoDB: CREATE EXTERNAL TABLE orders_ddb_2012_01 ( order_id string, customer_id string, order_date bigint, total double ) STORED BY 'org.apache.hadoop.hive.dynamodb.DynamoDBStorageHandler' TBLPROPERTIES ( "dynamodb.table.name" = "Orders-2012-01", "dynamodb.column.mapping" = "order_id:Order ID,customer_id:Customer ID,order_date:Order Date,total:Total" );

- 68. Query DynamoDB: SELECT customer_id, sum(total) spend, count(*) order_count FROM orders_ddb_2012_01 WHERE order_date >= unix_timestamp('2012-01-01', 'yyyy-MM-dd') AND order_date < unix_timestamp('2012-01-08', 'yyyy-MM-dd') GROUP BY customer_id ORDER BY spend DESC LIMIT 5;
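
To make the aggregation on the slide above concrete, here is a small in-memory sketch of what the query computes: filter by date range, total the spend and count the orders per customer, then keep the top five by spend. The sample rows and the names `epoch` and `top_spenders` are made up for illustration, timestamps are assumed to be UTC seconds, and this is not how Hive actually executes the query.

```python
from collections import defaultdict
from datetime import datetime, timezone


def epoch(date_str):
    """Parse 'YYYY-MM-DD' as seconds since the epoch (assumption: UTC)."""
    dt = datetime.strptime(date_str, "%Y-%m-%d").replace(tzinfo=timezone.utc)
    return int(dt.timestamp())


def top_spenders(orders, start, end, limit=5):
    """orders: iterable of (order_id, customer_id, order_date, total)."""
    spend = defaultdict(float)
    count = defaultdict(int)
    for _order_id, customer_id, order_date, total in orders:
        if start <= order_date < end:        # WHERE clause
            spend[customer_id] += total      # sum(total)
            count[customer_id] += 1          # count(*)
    # ORDER BY spend DESC, then LIMIT
    ranked = sorted(spend, key=lambda c: spend[c], reverse=True)
    return [(c, spend[c], count[c]) for c in ranked[:limit]]


# Made-up sample rows; the last one falls outside the date range.
orders = [
    ("o1", "c1", epoch("2012-01-02"), 10.0),
    ("o2", "c2", epoch("2012-01-03"), 25.0),
    ("o3", "c1", epoch("2012-01-05"), 20.0),
    ("o4", "c3", epoch("2012-01-09"), 99.0),
]
result = top_spenders(orders, epoch("2012-01-01"), epoch("2012-01-08"))
print(result)
```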

- 69. Archived data in S3: CREATE EXTERNAL TABLE orders_s3_export ( order_id string, customer_id string, order_date int, total double ) PARTITIONED BY (year string, month string) ROW FORMAT DELIMITED FIELDS TERMINATED BY '\t' LOCATION 's3://elastic-mapreduce/samples/ddb-orders';

- 70. Query S3: SELECT year, month, customer_id, sum(total) spend, count(*) order_count FROM orders_s3_export WHERE customer_id = 'c-2cC5fF1bB' AND month >= 6 AND year = 2011 GROUP BY customer_id, year, month ORDER BY month DESC;

- 71. Export to S3: CREATE EXTERNAL TABLE orders_s3_new_export ( order_id string, customer_id string, order_date int, total double ) PARTITIONED BY (year string, month string) ROW FORMAT DELIMITED FIELDS TERMINATED BY ',' LOCATION 's3://'; INSERT OVERWRITE TABLE orders_s3_new_export PARTITION (year='2012', month='01') SELECT * FROM orders_ddb_2012_01;
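
Hive stores each partition of a partitioned table in its own `key=value` subdirectory under the table's `LOCATION`, so the `INSERT OVERWRITE ... PARTITION (year='2012', month='01')` above writes under `year=2012/month=01/`. A small sketch of that layout follows. The bucket name is a placeholder because the slide's location is elided, and `partition_path` is a hypothetical helper that ignores Hive's escaping of special characters.

```python
def partition_path(table_location, partitions):
    """Build the directory Hive uses for one partition of a table.

    partitions: ordered (column, value) pairs, in PARTITIONED BY order.
    """
    base = table_location.rstrip("/")
    suffix = "/".join(f"{col}={val}" for col, val in partitions)
    return f"{base}/{suffix}/"


# Placeholder bucket; the slide does not give the real location.
path = partition_path(
    "s3://example-bucket/orders",
    [("year", "2012"), ("month", "01")],
)
print(path)
```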

- 72. Perfect match

- 73. Analytics: Data intensive, scale out; Tightly coupled

- 75. Parallel computation: Drug discovery; Financial risk analysis; Social media & gaming; Manufacturing & design; Transcoding & rendering; Genomics

- 76. CC1 + GPU Cluster compute instances

- 77. CC2

- 78. CC2: 16 Intel Xeon cores; Placement groups; Non-blocking, fully bisectional 10 GigE network

- 79. 240 TFLOPS: 42nd fastest supercomputer