OpenNMT


A brief introduction to OpenNMT: Open-Source Neural Machine Translation in Torch.


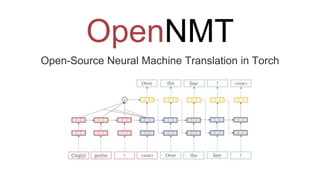

- 1. OpenNMT: Open-Source Neural Machine Translation in Torch

- 2. What is OpenNMT? OpenNMT was originally developed by Yoon Kim and harvardnlp. Major source contributions and support come from SYSTRAN. In short, it is “a modularized translation program using a Seq2Seq attention model”.

- 3. Features of OpenNMT
  - Simple general-purpose interface; requires only source/target files.
  - Speed and memory optimizations for high-performance multi-GPU training.
  - Includes a dependency-free C++ translator for model deployment.
  - Latest research features to improve translation performance.
  - Pretrained models available for several language pairs.
  - Extensions to allow other sequence generation tasks such as summarization and image-to-text generation.
  - Active open community welcoming both academic and industrial requests and contributions.

- 4. Model: an encoder-decoder LSTM. The context vector is combined with the decoder hidden state, and the probability of the next token is computed by an affine transformation followed by a softmax.

- 7. Example: SYSTRAN picked this phrase to show the superiority of their system: “I am officially running … for president of the United States, and we are going to make our country great again.” Notice that understanding the meaning of ‘running’ requires the context of the whole sentence.
  - OpenNMT Demo
    - Korean: 저는 공식적으로 미국 대통령에 입후보하고 있으며, 우리는 우리 나라를 다시 위대하게 만들 것입니다.
    - Chinese: 我是在正式竞选美国总统,我们将再次使我国伟大。
  - Google Translate
    - Korean: 나는 공식적으로 미국 대통령을 위해 달리고있다. 그리고 우리는 다시 우리나라를 위대하게 만들 것이다.
    - Chinese: 我正式运行......为美国总统,我们将使我们的国家再次大。
  - Naver Translate
    - English to Korean: 나는 미국의 대통령을 공식적으로 운영하고 있으며, 우리 나라를 다시 위대하게 만들 것이다.
    - Korean to English: I am officially running for president of the United States, and we will make our country great again.

- 8. OpenNMT in Python: We noticed that it is not too difficult to write an OpenNMT clone in Python using TensorFlow, because many of the functionalities in OpenNMT are already implemented in TensorFlow. Plan 1: modularize the TensorFlow Seq2Seq example and add some convenient tools. Plan 2: make it a console application. As a new side project, we plan to publish it by the end of the winter break (Jan 23). Open question: contribute to OpenNMT, or publish a new open-source project? https://github.com/keonkim/OpenNMT
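As a rough illustration of the model on slide 4, the sketch below combines a context vector with a decoder hidden state and turns the result into a next-token distribution. It is plain Python written for readability, not OpenNMT code: the function names, the `tanh` activation in the combining layer, and all weight shapes are assumptions made for this example.

```python
import math


def affine(W, b, x):
    """Return W x + b for a weight matrix W (list of rows) and a vector x."""
    return [sum(w * xi for w, xi in zip(row, x)) + bi for row, bi in zip(W, b)]


def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def next_token_distribution(context, hidden, W_c, b_c, W_out, b_out):
    """Hypothetical output layer: combine, project, normalize.

    context, hidden: vectors of size dim
    W_c:   dim x 2*dim weights of the one-layer combining MLP (assumed tanh)
    W_out: vocab x dim weights of the final affine transformation
    """
    # Combine the context vector with the decoder hidden state.
    combined = [math.tanh(v) for v in affine(W_c, b_c, context + hidden)]
    # Affine transformation to one score per vocabulary entry, then softmax.
    return softmax(affine(W_out, b_out, combined))


if __name__ == "__main__":
    # Toy sizes: dim = 2, vocabulary of 3 tokens.
    probs = next_token_distribution(
        context=[1.0, 0.0],
        hidden=[0.0, 1.0],
        W_c=[[1.0, 0.0, 0.0, 0.0], [0.0, 0.0, 0.0, 1.0]],
        b_c=[0.0, 0.0],
        W_out=[[1.0, 1.0], [0.0, 0.0], [-1.0, -1.0]],
        b_out=[0.0, 0.0, 0.0],
    )
    print(probs)
```

The returned list has one probability per vocabulary entry and sums to 1; a real system would learn the weights rather than hard-code them.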

Editor's Notes

- We propose two encoder-decoder architectures for this task. Our word-based architecture (WORD) is similar to that of Luong et al. (2015). Context vector: the context vector is combined with the decoder hidden state through a one-layer MLP (yellow), after which an affine transformation followed by a softmax is applied to obtain a distribution over the next word/tag. Here, we model the probability of the target given the source, p(t | s), with an encoder neural network that summarizes the source sequence and a decoder neural network that generates a distribution over the target words and tags at each step given the source.

- FeaturesEmbedding: an nngraph unit that maps feature ids to embeddings. When using multiple features, this can be the concatenation or the sum of the individual embeddings.

- FeaturesGenerator: feature decoder generator. Given the RNN state, it produces a categorical distribution over tokens and features. Implements $$[\mathrm{softmax}(W^1 h + b^1), \mathrm{softmax}(W^2 h + b^2), ..., \mathrm{softmax}(W^n h + b^n)]$$.

- GlobalAttention: the current target hidden state h_j is combined with each of the memory vectors in the source to produce attention weights. Global attention takes a matrix and a query vector. It then computes a parameterized convex combination of the matrix based on the input query to compute the context vector. (Diagram: each memory vector H_1 ... H_n is paired with the query q, and all pairs feed into a single output a.) Constructs a unit mapping: $$(H_1 .. H_n, q) => (a)$$ where H is of `batch x n x dim` and q is of `batch x dim`. The full function is $$\tanh(W_2 [(\mathrm{softmax}((W_1 q + b_1) H) H), q] + b_2)$$.
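The global attention formula above can be traced step by step for a single example (no batch dimension). This is a minimal pure-Python sketch, not the nngraph implementation: `global_attention` and its helpers are hypothetical names, and the weights are passed in as plain nested lists purely for illustration.

```python
import math


def matvec(W, x):
    """Multiply a matrix W (list of rows) by a vector x."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def global_attention(H, q, W1, b1, W2, b2):
    """Compute tanh(W2 [softmax((W1 q + b1) H) H, q] + b2) for one example.

    H:  n source memory vectors, each of size dim
    q:  query vector of size dim
    W1: dim x dim, b1: dim
    W2: dim x 2*dim, b2: dim
    Returns the attentional output and the attention weights.
    """
    # Project the query: W1 q + b1.
    projected = [p + b for p, b in zip(matvec(W1, q), b1)]
    # Score every memory vector against the projected query (dot product).
    scores = [sum(p * h for p, h in zip(projected, h_i)) for h_i in H]
    # Normalize the scores into attention weights that sum to 1.
    weights = softmax(scores)
    # Context vector: convex combination of the memory vectors.
    context = [sum(w * h_i[d] for w, h_i in zip(weights, H)) for d in range(len(q))]
    # Concatenate [context, q], apply the second affine layer and tanh.
    pre = matvec(W2, context + list(q))
    output = [math.tanh(v + b) for v, b in zip(pre, b2)]
    return output, weights
```

Because the weights come from a softmax, the context vector always lies inside the convex hull of the memory vectors, which is what the note means by a "convex combination".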