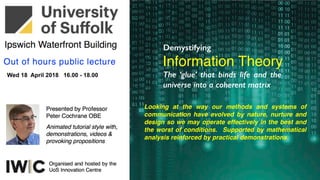

Demystifying Information Theory

- 1. Demystifying Information Theory: the ‘glue’ that binds life and the universe into a coherent matrix. Looking at the way our methods and systems of communication have evolved by nature, nurture and design so we may operate effectively in the best and the worst of conditions. Supported by mathematical analysis, reinforced by practical demonstrations.

- 2. INFORMATION THEORY. Origin: Claude E. Shannon in 1948, “A Mathematical Theory of Communication”. Genetic: lossless (Zip) / lossy (MP3) data compression/coding for info transmission/storage. Extended: linguistics, human perception, and the understanding of black holes ++. Entropy: quantifies the amount of uncertainty involved in any form of information. Measures: relative entropy, mutual information, channel capacity, bandwidth, S/N, bit errors. Intersections: maths, statistics, computer science, electronics, physics, chemistry, neurobiology. Applicability: statistical inference, natural language processing, cryptography, neurobiology, evolution/function of molecular codes, thermal physics, quantum computing, linguistics, plagiarism detection, pattern recognition, anomaly detection, source coding, channel coding, algorithmic complexity theory, cosmology, security, +++ all forms of communications from electronic, optical, paper, biological +++ quantification, data storage, and communication.

- 3. INFORMATION. Communication: Actions, Gestures, Facial Expression, Body Language, Pheromones, Grunts - Noises, Spoken Language, Shouting, Waving, Fires, Smoke, Flags, Drums, Horns, Mirrors, Runners, Riders, Pigeons, Semaphore. Storage: Paintings, Carvings, Stories, Songs, Dance, Drama, Tally Sticks, Clay Tablets, Animal Skins, Bamboo Strips, Paper, Books, Libraries. Mechanisms: speed/content limited; most suited to localised applications in largely contained societies with little movement of goods and people… best thought of as ‘human scale’ systems.

- 4. Computing Power GROWTH. Exponentially more processing power (and, more recently, intelligence) for exponentially less: cost, physical space, energy, material… fundamentally impossible without a solid understanding of info theory.

- 5. Data Storage GROWTH. Exponentially more data created and stored for exponentially less: cost, physical space, energy, material… all fundamentally impossible without a solid understanding of information (theory).

- 6. Communication GROWTH. Exponentially more devices and products on-line for exponentially less per item: cost, physical space, energy, material… all generating more connections and traffic… fundamentally impossible without information theory.

- 7. INFORMATION: The ~10m limit of humans. Conversation - Eye Contact; Facial Expressions - Pheromones; Body Language - Privacy. At about 1m all forms of human communication work extremely well.

- 8. INFORMATION: The ~10m limit of humans. As we physically move apart, all forms of human communication progressively degrade and we lose clarity and privacy. Our technologies have only recently (~100 years) closed this gap across the planet. The information storage story is very similar: from stories, song and dance to IT over 1000s of years; IT ~50 years.

- 9. PETRONIUS ARBITER: Very early insight into information. “The most important message is the least expected one”. Petronius (c. 27 – 66 AD) was a Roman writer of the Neronian age and a noted satirist. He is identified with C. Petronius Arbiter, but the manuscript text of the Satyricon calls him Titus Petronius. The Satyricon is his sole surviving work. This was first quoted at me when I was a student in the 1970s, but I have never been able to validate it; just like Confucius, Arbiter is credited with hundreds of undocumented quotes of this nature.

- 10. SEMAPHORE: Victory/Nelson/Trafalgar 1805. British Navy, 1653 & on. England expects every man to do his duty. At sea the ships are rolling, the wind is blowing, it may be raining, sleeting, snowing, and it might be dull, overcast, stormy, or night time! Very limited message sets. Slow rate of communication. High probability of error. Can be read by the enemy? Needs coding for secrecy.

- 11. SEMAPHORE: Chappe - Napoleonic War - 1791 (1790 & on). England expects every man to do his duty. Lille to Paris communication with towers every 12 - 25 km: about an hour for 150 km, provided all the operators were alert/awake/not indisposed! ~10 words/minute. Wide range of message sets. Slow rate of communication. High probability of error. Can be read by the enemy? Needs coding for secrecy.

- 12. SEMAPHORE: Surrey - Napoleonic War - 1822 (1790 & on). England expects every man to do his duty. Portsmouth to London comms with towers every 12 - 25 km: about an hour for 150 km, provided all the operators were alert/awake/not indisposed! ~10 words/minute. Wide range of message sets. Slow rate of communication. High probability of error. Can be read by the enemy? Needs coding for secrecy.

- 13. SEMAPHORE: Modern times. Heliograph - Ancient China - Modern Wars, ~1822. USA and UK engineers combined the heliotrope surveying instrument (Carl Friedrich Gauss, 1821) and Morse code for signalling. Henry C. Mance, working for the UK government’s Persian Gulf Telegraph Department in Karachi, perfected (1869) the wireless communications apparatus he dubbed the heliograph. In 1875 Mance’s device was approved for use by the British-Indian Army. ~20 words/minute.

- 14. RAILWAYS: Changed everything. The high speed transportation of people and goods at an inhuman speed and scale demanded faster information transport. Railway networks demanded faster telecommunications than all train traffic. Nationwide standard time was required for the first time in mankind’s history. Timetables, Safety, Signalling, Scheduling, Control, Orchestration.

- 15. TELEGRAPH: Saw a dash dot bubble. 1837 & on.

- 16. TELEGRAPH INVENTED. Railways = new industry. Standardised and very simple signalling format used to ensure high efficiency and a low error rate.

- 17. MORSE / VAIL CODE: A collaboration resulting in an iconic result. Vail refined the prototype produced by Morse, who retained all the patents. The ‘story’ is that, by visiting a printer’s and counting the number of occurrences of each letter, ‘e’ was found to be dominant and so was assigned the smallest element, one dot; ‘i’ was next and was assigned two dots, and then ‘t’ with a dash… and so on.

- 18. MORSE / VAIL CODE: A collaboration resulting in an iconic result. [Figure: Morse code tree.] The efficiency of the symbol and code relationship can be gauged from the above tree, with the least signal space used for the most common letters and the most allocated to the rarest: Q, Y, Z.
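
The claim that common letters get the shortest codes can be checked with a rough sketch. It assumes the modern International Morse alphabet (not necessarily the original Morse/Vail assignments), counts only dots and dashes while ignoring timing gaps, and uses the hypothetical helper name `morse_elements`.

```python
# International Morse code for the 26 Latin letters (assumed alphabet).
MORSE = {
    "A": ".-", "B": "-...", "C": "-.-.", "D": "-..", "E": ".", "F": "..-.",
    "G": "--.", "H": "....", "I": "..", "J": ".---", "K": "-.-", "L": ".-..",
    "M": "--", "N": "-.", "O": "---", "P": ".--.", "Q": "--.-", "R": ".-.",
    "S": "...", "T": "-", "U": "..-", "V": "...-", "W": ".--", "X": "-..-",
    "Y": "-.--", "Z": "--..",
}


def morse_elements(text):
    """Count the dots and dashes needed to send the letters in text."""
    return sum(len(MORSE[c]) for c in text.upper() if c in MORSE)


message = "England expects every man to do his duty"
letters = sum(1 for c in message.upper() if c in MORSE)
average = morse_elements(message) / letters

print(f"{letters} letters, {morse_elements(message)} elements, "
      f"{average:.2f} elements per letter")
```

For this message the average comes out well below the four elements used by the rarest letters such as Q, Y and Z, because short codes like E and T dominate ordinary English.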

- 19. MORSE / VAIL CODE: Extended to other languages/character sets (Chinese, Russian, Japanese). All other language adaptations lead to significant inefficiencies!

- 20. CHARACTER SETS: A path resulting in a transformative result. An evolution toward flexibility, disambiguation, adaptability, redundancy, reasonable efficiency, clarity, global standardisation of character format, spelling and phraseology.

- 21. REDUNDANCY in character sets & fonts. The transmission, processing, extraction, and utilisation of information. I love thee to the depth and breadth and height My soul can reach. 106783428576210048898337213498501

- 22. REDUNDANCY: Readable with missing bits. O ce m re unto th bre h, dear frie s, once m re; Or close the wa l up ith our En sh dead! In peace the 's nothing so beco s a an, As modest stilln s and h ility; B t when he bla t of war b ows in our ears, Then … Once more unto the breach, dear friends, once more; Or close the wall up with our English dead! In peace there's nothing so becomes a man, As modest stillness and humility; But when the blast of war blows in our ears, Then…

- 23. REDUNDANCY: Purposely shortened TXT. How r u 2day. Want 2 go 4 a drink @ 8 2nigt. Get a curry 2 ?

- 24–38. REDUNDANCY: Image Paradox and More

- 39. TELEGRAPH: Connecting every empire

- 40. TELEGRAPH: Titanic 1912 wireless

- 41. TELEGRAPH: WWII Military - Naval. Frequency selective fading. Thermal + spectral noise. Low level interference.

- 42. TELEGRAPH: WWII Telex in use ~1986. Developed to meet the need for speed + more accuracy + more and more traffic.

- 43. SEMAPHORE: Modern era BLINKING. Admiral Jeremiah Denton blinks T-O-R-T-U-R-E using Morse code as a P.O.W.

- 44. SEMAPHORE: Modern era Aldis Lamp. WWI & WWII. Ship - Ship, Ship - Shore, Aircraft - Aircraft, Ship - Aircraft +++. Still in use.

- 45. SPEECH RADIO: Commercial Airliner - ATC. Formalised operations/procedures and message sets compensate for poor signal strength, interference noise, and tiredness ++. Messages are generally bounded and, most importantly, expected ++

- 46. SPEECH RADIO: Bird Strike Emergency. Captain Chesley Sullenberger lands Cactus 1549 in the NY Hudson River, 15/01/09. Real cockpit tapes - flight simulation.

- 47. SPEECH RADIO: Military Aircraft Combat

- 48. SPEECH RADIO: Military HF SSB Channel

- 49. INFORMATION: In the hierarchy to wisdom. Information theory studies the transmission, processing, extraction & utilisation of information. The case of communications over a noisy channel was first detailed in the 1948 paper by Claude Shannon: $C = B\,T \log_2(1 + S/N)$. Hierarchy: Wisdom, Knowledge, Information, Data, Noise. Human activity: Government, Services, Commerce, Industry, Farming, Society, Education, Research, Development. Continually analysed, filtered, rationalised, published. Created by all human/machine/network activities 365 x 24. Meaning and implications abstracted. Making the best decisions possible based on all our accumulated knowledge models & experiences.

- 50. INFORMATION: Describe, define & quantify. Bits, Bytes, Bauds, Signal, Noise, Coding, Storage, Decoding, Bandwidth, Processing, Encryption, Decryption, Interference, Transmission +++++. Bits, Bytes, Bauds, Signal, Noise, Coding, Bandwidth, Transmission.

- 51. INFORMATION: Describe, define & quantify. Bits = Binary Digits = two states: 1; 0. Bytes = collection of bits = typically an 8-bit byte. Bauds = signalling element rate, which may or may not equal the bit rate, for reasons associated with matching the transmission channel/medium and/or dealing with noise and/or sources of interfer

- 52. INFORMATION: Claude Shannon Original. Claude Elwood Shannon (1916 – 2001): mathematician, electrical engineer, and cryptographer at Bell Labs. Worked on cryptography 1940 to 1945, to prove that the SIGSALY coding linking the U.S. President with Winston Churchill by HF radio-telephone could not be broken. Founded information theory during the same period, with a landmark paper, A Mathematical Theory of Communication, 1948.

- 53. ENTROPY: A measure of order. Order implies less information. Disorder implies a lot of information. Expected messages imply less information. Unexpected messages imply more information. Less ordered = greater entropy = more information. More ordered = lower entropy = less information.
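
The link between surprise and information can be made concrete with a small sketch. It assumes the usual measure of the information in a single event, $-\log_2 p$ bits for an event of probability $p$; the function name `self_information` is illustrative only.

```python
import math


def self_information(p):
    """Information in bits carried by an event of probability p (0 < p <= 1)."""
    if not 0.0 < p <= 1.0:
        raise ValueError("p must be in the range (0, 1]")
    return -math.log2(p)


# A fully expected message carries nothing; a rare one carries a lot.
for label, p in [("certain", 1.0), ("fair coin", 0.5), ("1 in 1024", 1 / 1024)]:
    print(f"{label:>10}: p = {p:.6f} -> {self_information(p):.1f} bits")
```

The least expected message in this list, the 1-in-1024 event, carries ten times the information of a fair coin toss.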

- 54. INFORMATION: The basics of what we mean. Axes: x, y.

- 55. INFORMATION: The basics of what we mean. Axes: x, y. Information = x; y; white

- 56. INFORMATION: The basics of what we mean. Axes: x, y. Information = x; y; white; (dot colour, size, location)

- 57. INFORMATION: The basics of what we mean. Axes: x, y. Information = x; y; white; (dots, colours, sizes, locations)

- 58. INFORMATION: The basics of what we mean. Axes: x, y. Info = x; y; colourgrad; (shapes, colours, sizes, locations)

- 59. INFORMATION: Fundamental relationship. Axes: Complexity (units ??) and Information (units ??). What are the law(s) and/or relationship(s) defining the shape of this line? Information = f(complexity)

- 60. INFORMATION: Describe, define & quantify. Transmission: information movement, A to B. Transmitter: Coding, Modulation. Channel: Copper Wire, Optical Fibre, Wireless. Receiver: Demodulation, Decoding, Error Correction. Degraders: Noise, Interference, Channel Variations, Reflections. Sent Bit Stream; Signal; Distorted Signal; Recovered Bit Stream. Signal + Noise; S/N Ratio; Error Rate ????; Error Rate ????; Spectrum of Stream.

- 61. INFORMATION: Dictionary definition - basic. noun [mass noun] 1 facts provided or learned about something or someone: a vital piece of information. • [count noun] Law a charge lodged with a magistrates' court: the tenant may lay an information against his landlord. 2 what is conveyed or represented by a particular arrangement or sequence of things: genetically transmitted information. • Computing data as processed, stored, or transmitted by a computer. • (in information theory) a mathematical quantity expressing the probability of occurrence of a particular sequence of symbols, impulses, etc., as against that of alternative sequences. ORIGIN late Middle English (also in the sense ‘formation of the mind, teaching’), via Old French from Latin informatio(n-), from the verb informare (see inform). This is the kernel of what we have to understand and why we need a theory and dimensioned model.

- 62. INFORMATION: Describe, define & quantify. Conditioning: information storage, A to B. Coding, Modulation. Storage: Magnetic, Optical, Thermal. Decoding, Error Correction. Degraders: Noise, Interference, Natural Decay. Data In; Signal; Distorted Signal; Recovered Data Out. Error Rate ????

- 63. INFORMATION: Describe, define & quantify. Transmission: information movement, A to B. Transmitter: Coding, Modulation. Channel: Copper Wire, Optical Fibre, Wireless. Receiver: Demodulation, Decoding, Error Correction. Degraders: Noise, Interference, Channel Variations, Reflections. Bit Stream In; Signal; Distorted Signal; Recovered Bit Stream. Signal + Noise; S/N Ratio; Error Rate ????; Error Rate ????

- 64. ENTROPY of an Info Source. All information theory analysis assumes binary (Base 2) as the foundation level and is then expanded to the more complex Ternary… ‘M’ary telecom, computing and storage, up to and including analogue systems such as AM, FM, SSB wireless, all forms of imagery, and languages, including atomic interactions. Based on the probability mass function* of each symbol, the Shannon Entropy (bits/symbol) is given by: $H = -\sum_i p_i \log_2 p_i$, where $p_i$ = the occurrence probability of the $i$-th symbol. *The PMF gives the probability that a discrete random variable is exactly equal to some value; it is a primary means of defining a discrete probability distribution. A PMF differs from a probability density function (pdf), which is associated with continuous rather than discrete random variables; the probability density function must be integrated over an interval to yield a probability.
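
A minimal sketch of the entropy calculation, assuming the standard form $H = -\sum_i p_i \log_2 p_i$ in bits/symbol. The function names `shannon_entropy` and `entropy_of_text` are illustrative, and the text version simply uses observed symbol frequencies as the probability mass function.

```python
import math
from collections import Counter


def shannon_entropy(probabilities):
    """Entropy in bits/symbol of a discrete distribution.

    Zero-probability symbols are skipped, following the convention
    that p * log2(p) tends to 0 as p tends to 0.
    """
    return -sum(p * math.log2(p) for p in probabilities if p > 0)


def entropy_of_text(text):
    """Estimate bits/symbol from the symbol frequencies found in text."""
    counts = Counter(text)
    total = sum(counts.values())
    return shannon_entropy(c / total for c in counts.values())


print(shannon_entropy([0.5, 0.5]))       # two equiprobable symbols
print(shannon_entropy([0.25] * 4))       # four equiprobable symbols
print(entropy_of_text("once more unto the breach"))
```

Equiprobable symbols give the largest value for a given alphabet size; any skew in the frequencies pulls the figure down.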

- 65. ENTROPY: Information Source. The entropy of a discrete random bit stream (or discrete variable X) is a measure of the uncertainty when only its distribution is known: $H(X) = -\sum_x p(x) \log p(x)$. It is maximised when all $n$ outcomes are equiprobable: $p(x) = 1/n$, giving $H(X) = \log(n)$. An easily digested example is the tossing of a fair coin: $p_1 = p_2 = 1/2$, where $p_1$ = probability of a head and $p_2$ = probability of a tail. Then: $H = -\left(\tfrac{1}{2}\log_2\tfrac{1}{2} + \tfrac{1}{2}\log_2\tfrac{1}{2}\right) = 1$ bit. Notice that $-\log(p) = \log(1/p)$ and, by convention, $\lim_{p \to 0} -p\log(p) = 0$.

- 67. ENTROPY: Information Source. Plotting the entropy of a binary case with a range of bit probabilities using this formula: $H(p) = -p\log_2 p - (1-p)\log_2(1-p)$ …illustrates the importance of $p = 1/2$. Another way of looking at this would be gambling with biased coins: coin with Tails on both sides; fair coin; coin with Heads on both sides.
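
The shape of that curve can be tabulated without a plotting library. This sketch assumes the standard binary entropy function $H(p) = -p\log_2 p - (1-p)\log_2(1-p)$; the name `binary_entropy` and the crude text bar chart are illustrative choices.

```python
import math


def binary_entropy(p):
    """Entropy in bits of a two-outcome source with probabilities p and 1 - p."""
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must be in the range [0, 1]")
    if p in (0.0, 1.0):
        return 0.0  # a two-headed or two-tailed coin has no uncertainty
    return -p * math.log2(p) - (1 - p) * math.log2(1 - p)


# p = probability of heads, from a two-tailed coin (0.0) to a two-headed coin (1.0).
table = [(k / 10, binary_entropy(k / 10)) for k in range(11)]
for p, h in table:
    print(f"p = {p:.1f}  H = {h:.3f}  " + "#" * round(h * 40))
```

The bars peak at p = 0.5, the fair coin, and fall to zero at both ends, where the outcome of every toss is known in advance.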

- 68. ENTROPY: Mutual Information. A measure of the information obtained about one signal by observing another. Used to maximise the information shared between transmitted and received signals. The mutual information of X relative to Y is given by: $I(X;Y) = \sum_{x,y} p(x,y)\log_2\frac{p(x,y)}{p(x)\,p(y)}$. This is an important property in the fields of complex modulation, coding and encryption.
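
A short sketch of the mutual information between a transmitted bit X and a received bit Y, assuming the standard definition $I(X;Y) = \sum_{x,y} p(x,y)\log_2\frac{p(x,y)}{p(x)p(y)}$. The joint probability tables below are hypothetical channels chosen for illustration, not measurements.

```python
import math


def mutual_information(joint):
    """Mutual information in bits, where joint[i][j] = p(x_i, y_j)."""
    px = [sum(row) for row in joint]          # marginal distribution of X
    py = [sum(col) for col in zip(*joint)]    # marginal distribution of Y
    total = 0.0
    for i, row in enumerate(joint):
        for j, pxy in enumerate(row):
            if pxy > 0:
                total += pxy * math.log2(pxy / (px[i] * py[j]))
    return total


noiseless = [[0.5, 0.0], [0.0, 0.5]]        # received bit always equals sent bit
ten_percent = [[0.45, 0.05], [0.05, 0.45]]  # 10% of bits flipped
useless = [[0.25, 0.25], [0.25, 0.25]]      # received bit independent of sent bit

for name, joint in [("noiseless", noiseless), ("10% errors", ten_percent),
                    ("useless", useless)]:
    print(f"{name:>10}: {mutual_information(joint):.3f} bits per bit sent")
```

The figure drops from one bit per transmitted bit on the clean channel to zero when the received stream says nothing about what was sent.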

- 69. INFORMATION: Telecommunication. A primary driver for > 100 years, overtaken by computing, AI robotics, biology .. materials.

- 70. CAPACITY: How many bit/s ?? Transmission: information movement, A to B. Transmitter: Coding, Modulation. Channel: Copper Wire, Optical Fibre, Wireless. Receiver: Demodulation, Decoding, Error Correction. Degraders: Noise, Interference, Channel Variations, Reflections. Bit Stream In; Signal; Distorted Signal; Recovered Bit Stream. Signal + Noise; S/N Ratio; Error Rate ????; Error Rate ????

- 71. DEMO 1: If it is wrong

- 72. DEMO 2: Lossy coding

- 73. DEMO 3: Low/No Signal

- 74. DEMO 4: S/N Analogue. Log Out; GOTO LIVE APPS 1

- 75. SHANNON LIMIT: Transmission/Storage upper bound. The Shannon-Hartley Law was not originally derived as detailed below; its originators took a far more probabilistic and complex path. For ease of understanding we take an easier engineering route that definitely upsets purists! Let us assume some time series analogue electrical signal accompanied by random noise in the form: Signal = $v_s(t)$ and Noise = $v_n(t)$. In power terms this becomes: Signal Power = $V_s^2/R = S$ and Noise Power = $V_n^2/R = N$, where $V$ = rms value of $v$, and $R$ = a dissipating resistive load.

- 76. SHANNON LIMIT: Transmission/Storage upper bound. It is also assumed that Signal = $v_s(t)$ and Noise = $v_n(t)$ are statistically independent, i.e. knowing something about one gives you no idea about the other; they are mathematically orthogonal, so their powers add. We now assume a quantisation of the signal into $b$-bit symbols or numbers based on the relative rms values such that: $2^b = \sqrt{V_s^2 + V_n^2}\,/\,V_n$. Which, with a little mathematical juggling, becomes: $2^b = \sqrt{1 + S/N}$.

- 78. SHANNON LIMIT: Transmission/Storage upper bound. Rearranging further, this becomes: $b = \tfrac{1}{2}\log_2(1 + S/N)$. If we now make $M$ $b$-bit measurements in a time $T$, the amount of information bits collected will be: $I = M\,b = \tfrac{M}{2}\log_2(1 + S/N)$. Now the Sampling Theorem tells us that the highest practical rate $M/T$ is: $M/T = 2B$, where $B$ is the channel bandwidth.

- 79. SHANNON LIMIT: Transmission/Storage upper bound. And just a little more rearranging gets us to the ‘bottom line’: $I/T = B \log_2(1 + S/N)$ bits/second. This is the fastest rate (an upper bound) at which information can be transmitted over a given channel. So the maximum amount of information that can be transported in a given time $T$ over this channel is: $I = B\,T \log_2(1 + S/N)$ bits.
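
The bottom line is easy to evaluate numerically. This is a sketch that assumes the familiar Shannon-Hartley form, rate = $B \log_2(1 + S/N)$ with S/N as a linear power ratio; the function names and the 3 kHz, 30 dB example channel are hypothetical values chosen for illustration.

```python
import math


def db_to_ratio(snr_db):
    """Convert a signal-to-noise ratio in dB to a linear power ratio."""
    return 10 ** (snr_db / 10)


def channel_capacity(bandwidth_hz, snr_ratio):
    """Upper bound on the information rate in bits/second."""
    return bandwidth_hz * math.log2(1 + snr_ratio)


def max_information(bandwidth_hz, duration_s, snr_ratio):
    """Upper bound on the bits that can be moved in duration_s seconds."""
    return channel_capacity(bandwidth_hz, snr_ratio) * duration_s


# Hypothetical voice-grade channel: 3 kHz wide with 30 dB S/N.
snr = db_to_ratio(30)
print(f"Rate limit : {channel_capacity(3000, snr):,.0f} bits/second")
print(f"In 60 s    : {max_information(3000, 60, snr):,.0f} bits")
```

Because S/N sits inside the logarithm, doubling the bandwidth doubles the bound, while doubling the signal power adds at most one bit per second per hertz.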

- 80. SHANNON LIMIT: Transmission/Storage upper bound. Axes: S/N (dB), BW (Hz), Duration T (seconds). $I = B\,T \log_2(1 + k\,S/N)$; $I \approx B\,T\,K\,(S/N)_{\mathrm{dB}}$

- 81. SHANNON LIMIT: Transmission/Storage upper bound. The same information transmitted over a channel in three different modes using S/N, BW and T as variable factors. Axes: S/N (dB), BW (Hz), Duration T (seconds).
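
The three-way trade can be sketched by fixing the amount of information and solving for the time needed, assuming $I = B\,T \log_2(1 + S/N)$ with S/N as a linear power ratio. The three modes and their numbers below are hypothetical, picked only so the arithmetic is easy to follow.

```python
import math


def duration_needed(info_bits, bandwidth_hz, snr_ratio):
    """Shortest time in seconds to move info_bits over the channel."""
    return info_bits / (bandwidth_hz * math.log2(1 + snr_ratio))


INFO_BITS = 1_000_000  # the same message in every mode

modes = {
    "wide band, poor S/N": (1_000_000, 1),      # 1 MHz, S/N = 1 (0 dB)
    "narrow band, good S/N": (100_000, 1023),   # 100 kHz, S/N = 1023 (~30 dB)
    "narrow band, poor S/N": (100_000, 1),      # 100 kHz, S/N = 1 (0 dB)
}

for name, (bandwidth_hz, snr_ratio) in modes.items():
    t = duration_needed(INFO_BITS, bandwidth_hz, snr_ratio)
    print(f"{name:>22}: {t:5.1f} s")
```

The first two modes take the same time, one spending bandwidth and the other spending signal power; the third has neither to spare and so pays in duration.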

- 82. ERROR RATE - S/N: Transmission/Storage noise impact. Suppose we transmit a binary signal of two voltage levels. The received signal will have picked up thermal and other forms of noise. y(t) = the bit stream; n(t) = the additive noise.

- 83. ERROR RATE - S/N: Transmission/Storage noise impact. Suppose we transmit a binary signal represented by two voltage levels. The received signal will have picked up thermal and other forms of noise. y(t) = the bit stream; n(t) = the additive noise.
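
How noise turns into bit errors can be sketched under some explicit assumptions that are not stated on the slide: the two levels are +A and -A volts, the bits are equiprobable, the noise is additive Gaussian with rms value sigma, and the receiver decides with a threshold at 0 V. Under those assumptions the error probability is $\tfrac{1}{2}\,\mathrm{erfc}\!\left(A / (\sigma\sqrt{2})\right)$. The function names are illustrative.

```python
import math
import random


def bit_error_probability(amplitude, noise_rms):
    """Theoretical error rate for +/-amplitude levels in Gaussian noise.

    noise_rms must be greater than zero.
    """
    return 0.5 * math.erfc(amplitude / (noise_rms * math.sqrt(2)))


def simulate_error_rate(amplitude, noise_rms, n_bits=50_000, seed=1):
    """Send random bits through the noisy channel and count wrong decisions."""
    rng = random.Random(seed)
    errors = 0
    for _ in range(n_bits):
        bit = rng.getrandbits(1)
        level = amplitude if bit else -amplitude
        received = level + rng.gauss(0.0, noise_rms)  # y(t) + n(t)
        decided = 1 if received > 0 else 0            # threshold at 0 V
        errors += decided != bit
    return errors / n_bits


for ratio in (0.5, 1.0, 2.0, 3.0):
    theory = bit_error_probability(ratio, 1.0)
    measured = simulate_error_rate(ratio, 1.0)
    print(f"A/sigma = {ratio:.1f}: theory {theory:.4f}, simulated {measured:.4f}")
```

The error rate falls very steeply as the signal level rises relative to the noise, which is why a modest improvement in S/N can make a channel look almost error free.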

- 84. ERROR RATE - S/N: Transmission/Storage noise impact. P1 = 0.5, P0 = 0.5; 0 1 0 1; P1 = ?, P0 = ?

- 85. ERROR RATE - S/N: Transmission/Storage noise impact

- 86. ERRORS: Decisions ≠

- 87–89. ERRORS: Decisions

- 90. SHANNON: Upper bound/Limit

- 91. REALITY WIFI: A London central city location. Axes: S + N Power, T seconds. B = 22 MHz

- 92. DEMO 4: S/N Analogue. Log Out; GOTO LIVE APPS 2

- 93. Any further questions or thoughts ?? cochrane.org.uk