Check(mate) Your Bias: A game-driven approach to educating your team about bias

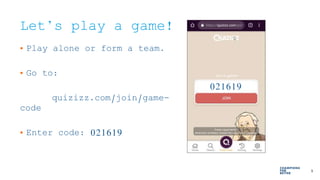

- 1. © 2018 Express Scripts. All Rights Reserved. Let’s play a game! • Play alone or form a team. • Go to: quizizz.com/join/game- code • Enter code: 021619

- 2. Thanks for playing! We’ll take a look at the results later…

- 3. Check(mate) Your Bias A games-driven approach Joe Johnson and Caron Garstka UXPA 2019

- 4. JOE JOHNSON LEAD UX ENGINEER CARON GARSTKA SR. UX RESEARCHER WHO?

- 5. Agenda 1 / WHY 2 / WORK 3 / GAMES 4 / PLAY 5 / NEXT

- 6. WHY

- 7. WE ARE ALL HUMAN. (IF NOT, THIS TALK ISN’T FOR YOU.) WHY

- 8. COGNITIVE BIAS AFFECTS HUMANS. WHY

- 9. What is cognitive bias? • Systematic error in thinking that affects decisions and judgments • A cognitive tool • Part of decision making • Can be beneficial and useful • Can distort our interpretation of the world

- 10.

- 11. 1. WE ARE HUMAN… 2. HUMANS HAVE BIAS… 3. BIAS COLORS OUR WORLD… BECAUSE

- 12. WORK

- 13. WE NEED TO RECOGNIZE OUR BIAS TO EFFECTIVELY DO OUR JOBS.

- 14. WHEN YOU KNOW BETTER, YOU DO BETTER.

- 15. BIAS IS HARD TO TALK ABOUT.

- 16. HOW DO WE MAKE IT APPROACHABLE?

- 17. GAMES!

- 18. Games… • An engaging learning tool • Non-threatening • Experiential • Emotional investment

- 19. GAMES HELP US BETTER RECOGNIZE BIAS IN ACTION. AWARENESS IS THE FIRST STEP TOWARD CHANGE.

- 20. TIME FOR A GAME: THREE NUMBERS

- 21. I’M GOING TO SHOW YOU THREE NUMBERS.

- 22. I’M GOING TO SHOW YOU THREE NUMBERS. THESE NUMBERS FOLLOW A RULE I HAVE IN MY HEAD.

- 23. YOUR ULTIMATE GOAL IS TO GUESS MY RULE.

- 24. TO GET INFO ABOUT MY RULE, YOU’LL GIVE ME THREE NUMBERS YOU THINK FIT THE RULE.

- 25. I’LL TELL YOU IF YOUR THREE NUMBERS FIT THE RULE.

- 26. READY?

- 27. THE NUMBERS ARE: 2, 4, 8 THINK ABOUT THE RULE… (DON’T SAY IT!)

- 28. THE NUMBERS ARE: 2, 4, 8 GIVE THREE NUMBERS YOU THINK FIT THE RULE. (I’LL TELL IF THEY FIT THE RULE.)

- 29. LET’S DISCUSS…

- 30. Anchoring Bias The tendency to rely too heavily on an initial piece of information.

- 31. Complexity Bias The tendency to believe that complex solutions are better than simple ones.

- 32. Confirmation Bias The tendency to find or use evidence in a way that confirms preexisting beliefs.

- 33. • Takeaway 2: K.I.S.S. (Keep it simple, silly) • Takeaway 3: Point and counter point

- 34. TIME FOR ANOTHER GAME: GROUP ‘EM UP

- 35. LET’S SAY YOU HAVE A CROWD OF PEOPLE.

- 36. EACH PERSON IS WEARING A BADGE.

- 37. BADGES CAN VARY BY SHAPE, COLOR, AND SIZE.

- 38.

- 39. YOUR TASK IS TO FORM GROUPS.

- 40. NOW FORM NEW GROUPS.

- 41. ONE FINAL ROUND OF GROUPS

- 42. THIS TIME, INCLUDE YOURSELF.

- 43. LET’S DISCUSS…

- 44. Framing Effect When people make different choices based on how a decision is presented or interpreted.

- 45. Availability Heuristic The tendency to rely on immediate examples that come to mind when evaluating a decision.

- 46. Affinity Bias The unconscious tendency to get along with others who are like us.

- 47. • Takeaway 1: Lift the veil, see beyond • Takeaway 2: What am I m_ss_ing? • Takeaway 3: Birds of a feather, dissent together

- 48. LAST GAME: LEG’GO OF MY LEGO

- 49. LET’S SEE HOW THE LEGO SET IS SHAPING UP…

- 50. NOW I NEED A SECOND VOLUNTEER.

- 51. HERE ARE THE RULES…

- 52. YOU’LL NAME THE HIGHEST PRICE YOU’RE WILLING TO PAY FOR THE LEGO SETS.

- 53. I’VE PICKED A PRICE THAT REPRESENTS THE VALUE OF EACH SET. (I’LL REVEAL IT LATER.)

- 54. IF YOUR PRICE IS EQUAL TO OR ABOVE MY PRICE, YOU’LL GET A PRIZE.

- 55. IF YOUR PRICE IS BELOW MY PRICE, YOU’LL WALK AWAY WITH NOTHING.

- 56. YOU’LL EACH GIVE A PRICE FOR YOUR LEGO SET AND YOUR PARTNER’S.

- 57. READY?

- 58. WRITE DOWN THE HIGHEST PRICE YOU’RE WILLING TO PAY FOR YOUR LEGO CREATION.

- 59. WRITE DOWN THE HIGHEST PRICE YOU’RE WILLING TO PAY FOR YOUR PARTNER’S LEGO CREATION.

- 60. WHAT DID YOU BID?

- 61. NOW TIME FOR THE BIG REVEAL…

- 62. $10.79!

- 63. LET’S DISCUSS…

- 64. IKEA Effect The tendency to place a disproportionately high value on the things you have helped create.

- 65. Sunk Cost Fallacy Tendency to make future decisions based on how much effort or resources have been put in previously.

- 66. • Takeaway 1: If you build it… • Takeaway 2: Let it go

- 67. BIAS CHECKER QUIZ REVIEW

- 68. LET’S REVISIT THE QUIZ

- 69. Revisit the Quiz What we Learn Answer (1) or (3) could indicate the Sunk Cost Fallacy at work. Depends on your reasons for keeping it!

- 70. Revisit the Quiz What we Learn “Yes” indicates a Confirmation Bias or Affinity Bias.

- 71. Revisit the Quiz What we Learn (1) may indicate Affinity Bias. (3) may be the best answer, where a diversity of views contributes to a rich creative process.

- 72. Revisit the Quiz What we Learn “Yes” indicates the Sunk Cost Fallacy.

- 73. Revisit the Quiz What we Learn (3) “Both” would be the balanced approach to avoid Confirmation Bias.

- 74. Revisit the Quiz What we Learn “Yes” may indicate an Availability Heuristic or an Anchoring Bias. Also the first idea might not be simple - think of Complexity Bias and the 2-4-8 game.

- 75. HOW MANY OF YOU WANT TO CHANGE YOUR ANSWERS?

- 76. CONCLUSION

- 77. GAME PLAY TO SHAPE EMPATHIC + DATA-DRIVEN CULTURE.

- 78. THIS IS WHAT IT COULD LOOK LIKE IN THE REAL WORLD…

- 79. NEXT • Rinse and repeat • Be open • Be kind • Game on!

- 80. QUESTIONS?

- 81. Contact Us: Joe Johnson Joe_johnson@express-scripts.com Caron Garstka cegarstka@express-scripts.com

- 82. APPENDIX

- 83. Cited resources. These resources were directly incorporated in the presentation.
  - H. Arkes & C. Blumer. “The psychology of sunk costs.” Organizational Behavior and Human Decision Processes. (1985) 35, 124-140.
  - B. Benson. “Cognitive bias cheat sheet.” (2016): https://betterhumans.coach.me/cognitive-bias-cheat-sheet-55a472476b18
  - J. Beshears, S. Frederick, and F. Gino. “Test Yourself: Are You Being Tricked by Intuition?” Harvard Business Review. (2015): https://hbr.org/2015/04/test-yourself-are-you-being-tricked-by-intuition
  - T. Bradberry. “13 Cognitive Biases That Really Screw Things Up For You.” (2018): https://www.linkedin.com/pulse/13-cognitive-biases-really-screw-things-up-you-dr-travis-bradberry/
  - S. Fowler. “Intercultural Simulation Games: A Review (of the United States and Beyond).” International Simulation and Gaming Association. (2010): https://doi.org/10.1177/1046878109352204
  - M. Funchess. “Implicit Bias -- how it effects us and how we push through.” TEDxFlourCity. (2014): https://www.youtube.com/watch?v=Fr8G7MtRNlk
  - E. Hall. “The 9 Rules of Design Research.” (2018): https://medium.com/mule-design/the-9-rules-of-design-research-1a273fdd1d3b
  - D. Kahneman. Thinking, Fast and Slow. (2011)
  - T. Keereepart. “3 design principles to help us overcome everyday bias.” TEDxPortland. (2016): https://www.youtube.com/watch?v=K6dstCUWsFY
  - R. F. Mackay. “Playing to learn: Panelists at Stanford discussion say using games as an educational tool provides opportunities for deeper learning.” (2013): https://news.stanford.edu/2013/03/01/games-education-tool-030113/

- 84. Cited resources. These resources were directly incorporated in the presentation.
  - F. Menzies. “‘A-Ha’ Activities for Unconscious Bias Training.” (2018): https://cultureplusconsulting.com/2018/08/16/a-ha-activities-for-unconscious-bias-training/
  - M. I. Norton. “The Ikea Effect: When Labor Leads to Love.” (2012): http://nrs.harvard.edu/urn-3:HUL.InstRepos:12136084
  - K. Pressner. “Are you biased? I am.” TEDxBasel. (2016): https://www.youtube.com/watch?v=Bq_xYSOZrgU
  - J.B. Soll, K.L. Milkman, and J.W. Payne. “Outsmart your own biases.” Harvard Business Review. (2015): https://hbr.org/2015/05/outsmart-your-own-biases
  - D. Stirling. “Games As Educational Tools: Teaching Skills, Transforming Thoughts.” Infospace. (2013): https://ischool.syr.edu/infospace/2013/06/27/games-as-educational-tools-teaching-skills-transforming-thoughts/
  - O. Svenson. “Are we all less risky and more skillful than our fellow drivers?” Acta Psychologica. (1981): https://www.sciencedirect.com/science/article/pii/0001691881900056?via%3Dihub
  - H. Turnball. “The Affinity Bias Conundrum: The Illusion of Inclusion Part III.” Diversity Journal. (2014): http://www.diversityjournal.com/13763-affinity-bias-conundrum-illusion-inclusion-part-iii/
  - “Can You Solve This?” Veritasium – YouTube.com. (2014): https://www.youtube.com/watch?v=vKA4w2O61Xo

- 85. Additional resources. These resources were not quoted or directly used for our presentation, but present ideas and examples that influenced our thinking and offer valuable, interesting insights.
  - M. Hartmann. “Unpacking the biases that shape our beliefs.” TEDxStJohns. (2015): https://www.youtube.com/watch?v=dU7Mhne4CzU
  - N.A. Heflick. “We Are Blind to Our Own Biases.” Psychology Today. (2011): https://www.psychologytoday.com/blog/the-big-questions/201102/we-are-blind-our-own-biases
  - C. Jager. “24 Cognitive Biases You Need To Stop Making [Infographic].” Lifehacker. (2018): https://www.lifehacker.com.au/2018/03/find-out-which-cognitive-biases-alter-your-perspective/
  - T. Laurinavicius. “Cognitive Biases You Need to Master to Design Better Websites.” (2018): https://webdesign.tutsplus.com/articles/cognitive-biases-you-need-to-master-to-design-better-websites--cms-30742
  - C. Ratcliff. “12 ways to fight bias in your team.” Synopsis of #uxchat on Twitter, hosted by K. Bachmann. (2018): http://whatusersdo.com/blog/how-to-fight-bias-in-your-organisation-or-team/
  - Mind Tools. “Avoiding Psychological Bias in Decision Making: How to Make Objective Decisions.” (2018): https://www.mindtools.com/pages/article/avoiding-psychological-bias.htm
  - M. Simmons. “Studies Show That People Who Have High ‘Integrative Complexity’ Are More Likely To Be Successful.” (2018): https://medium.com/the-mission/studies-show-that-people-who-have-high-integrative-complexity-are-more-likely-to-be-successful-443480e8930c

- 86. ADDITIONAL QUIZ REVIEW

- 87. Revisit the Quiz What we Learn Statistically (2) is most likely. (3) Indicates a Conjunction Bias. The combination of two conditions being considered more likely than one condition.

- 88. Revisit the Quiz What we Learn “Yes” may indicate the Pollyanna Principle. Framing Bias may show up in the viewpoint “look at the bright side...”

- 89. Revisit the Quiz What we Learn “Yes” may indicate the Bandwagon Effect or simply Wishful Thinking.

- 90. Revisit the Quiz What we Learn “Yes” may indicate the Halo Effect or a Clustering Illusion.

- 91. Revisit the Quiz What we Learn “Yes” indicates the Sunk Cost Fallacy.

- 92. Revisit the Quiz What we Learn An answer of “Yes” indicates a Clustering Illusion or the Gambler’s Fallacy.

- 93. Revisit the Quiz What we Learn If “good chance” means more than 50-50, then “Yes” indicates a Clustering Illusion or the Gambler’s Fallacy. Assumes 50% independent probability of red vs green!

- 94. Revisit the Quiz What we Learn If more people answered (1) than (2) it indicates Illusory Superiority. 93% of drivers claim to be better than average! (Svenson)
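
The independence assumption on the red-versus-green review slide above can be checked by brute force. The sketch below is an illustration only, not part of the original deck: it assumes each outcome is red or green with probability 50%, independently of earlier outcomes, and the function name and streak lengths are hypothetical. It enumerates every equally likely sequence and measures how often green follows a run of reds.

```python
from fractions import Fraction
from itertools import product


def p_green_after_red_streak(streak: int) -> Fraction:
    """Exact P(next outcome is green | the previous `streak` outcomes were red).

    Assumption (from the slide): red ('R') and green ('G') are independent
    50/50 outcomes, so every sequence of a given length is equally likely.
    """
    sequences = product("RG", repeat=streak + 1)
    # Keep only sequences that begin with an unbroken run of reds.
    after_streak = [s for s in sequences if all(x == "R" for x in s[:streak])]
    # Of those, count the ones where the final outcome is green.
    green_next = [s for s in after_streak if s[-1] == "G"]
    return Fraction(len(green_next), len(after_streak))


# Hypothetical streak lengths, chosen only for illustration.
for k in (1, 3, 5):
    print(k, p_green_after_red_streak(k))
```

Under these assumptions the conditional probability does not depend on the streak length, which is why expecting green to be "due" after a run of reds reflects the Gambler's Fallacy.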

Editor's Notes

- We will pull up the quiz while people are filtering in. They can start playing when they are seated. USE your real name if you like – or USE a TEAM name or a CODE name – Just have fun with it – there aren’t really any RIGHT answers… This is like “Whose Line Is It Anyway?” where the points don’t matter! (But the real world implications do!)

- When time to start, we will shut quiz down and do intro.

- Hello and welcome to our talk! This is Check(mate) Your Bias: a games-driven approach to having conversations with your team about cognitive bias!

- Before we jump in, a little bit about us. Hi, I’m Joe Johnson, a Lead UX Engineer at Express Scripts. My background is a long career in software development and analytics, and for the past seven years I have focused more on machine learning, UX research, and how to build a data-driven culture in an organization. The desire to foster a data-driven culture is what has brought me here today with Caron. Joe’s inspiration: As we see more machine and algorithmic decisions being made, sometimes we see unacceptable ERRORS in those algorithmic decisions! One takeaway is that it asks us to look more closely at WHO WE ARE as humans – what HUMAN errors are WE making when we TRAIN machines? One source of those human errors is cognitive bias. And there’s no Easy Button to turn it off! So I started exploring GAMES as a WAY for us in UX to engage this problem openly, and with a learning mindset. I’m Caron Garstka, and I am a Senior UX Researcher at Express Scripts. Joe and I work together on the same team and had coinciding fantasies of doing a talk on cognitive bias and how to have these conversations with other technologists on the team. That is part of the reason why we are here today! Caron’s inspiration: My inspiration was my experience at UXPA last year. Quite a few talks about cognitive bias spoke to the need to address it in our work and provide education across our teams. But I was left wondering how we could effectively have these conversations with cross-functional teams that have people with varying skill sets, without being confrontational or combative, and while also being engaging.

- This is what we have planned for our talk. (walk through agenda)

- We are all human. As a part of being human we have evolved mechanisms to help aid our decision making. Our brains have too much information coming in and because of that our brains take shortcuts.

- These shortcuts are called cognitive biases and they affect everyone. If you have a brain, you’re automatically biased.

- We rely on them to make quick decisions. Individuals create their own “subjective social reality” from their perception of the input. Biases are not inherently good or bad. They act as a cognitive tool. They are all of the tiny assumptions we make as we navigate the world because we’re bombarded with too much information – our brains need a way to sort through the information and make sense of it. https://medium.com/the-mission/studies-show-that-people-who-have-high-integrative-complexity-are-more-likely-to-be-successful-443480e8930c Many biases were instrumental to our survival in an ancient world, but can lead to irrational decisions in the modern world. With the explosion in the availability of ever more data about user experience we have more power to "know" things we didn't know before. But our cognitive biases can get in the way.

- The reality is that our perceptions are riddled with unchecked biases. That’s generally a good and necessary thing, because biases are cognitive shortcuts that allow us to make sense of way more sensory data than we could otherwise handle. But these biases can make us less effectual in our roles as user experience professionals - researchers, designers, data scientists, and strategists. These same biases that helped eons ago, can now get in the way. As you can see, there are well over 100 biases that color the way we see the world. When there is too much information, when there is not enough meaning, when we need to make a split-second decision, or when we are trying to remember, at least one of these could be coming into play. https://betterhumans.coach.me/cognitive-bias-cheat-sheet-55a472476b18

- So because we are human, and humans have bias, and bias colors our world…

- Bias also affects our work.

- In every step of research, even with the most rigorous application of the scientific method, there is one source of variability we might never see - ourselves. Our cognitive biases can influence our research and what we come to "know" in many ways. In every step of design, even with unbiased research supporting it, cognitive bias is present. We’re all human. Rhiannon Barrington presented a great session with that title just yesterday in this same room.

- To be better at what we do, we need to talk about our bias. We can hope to avoid the most detrimental effects of bias by becoming aware of how biases affect our decision-making and ‘science’. Melanie Funchess: "What has been done can be undone…. Our brains have tremendous capacity for growth and change." Call yourself on your actions. It takes being extremely self-aware.

- BUT there’s a catch! “BIAS IS HARD TO TALK ABOUT”. It is hard to talk about because there is a strong emotional component – these cognitive biases can make us feel faulty, and dumb! Seeing them and recognizing them in ourselves involves personal vulnerability. Especially true when business outcomes are on the line – we may be entrusted with decisions involving a lot of dollars or the future of our organizations. So this learning to CHECK your BIAS - these conversations - need to be handled in a non-threatening way so that people aren’t alienated, but rather drawn into the topic. We want our teams to know and to care about cognitive biases, not feel defensive or threatened by them.

- So the question then becomes: HOW do we make learning about bias more approachable and non-threatening?

- So we’ve been exploring GAMES as an approach to educating teams about bias.

- Why games though? Games function as an ENGAGING LEARNING TOOL that makes uncomfortable topics easier to approach. (Cite literature- pedagogy for teaching) Games allow us to let our walls down and have OPEN CONVERSATIONS in a NON-THREATENING context and without alienating people. We can UNCOVER our BIASES WITHOUT BEING PUT ON THE SPOT regarding actual work product. Games are EXPERIENTIAL. Why just TELL when you can SHOW how something works? Experiential Learning is effective for self reflection. (citations). Games ask you not just to experience something, but also to GET INVESTED in the activity, to have successes and failures, and measurable outcomes. This adds FUEL to the fire of experiential learning. So we've been exploring this work with games, and brought some to share with you today…

- So the GOAL is NOT to be an expert on cognitive bias. The GOAL is to BUILD OUR AWARENESS, as the first step toward change. And maybe have fun doing it…

- So let’s PLAY a GAME. I’ll kick off this first one, and then after this we’ll hear some more from Caron.

- I’m going to start off by showing you three numbers… Now, if you’ve played this game before then please play SILENTLY in order to let the MAGIC happen for everyone else, ok? – THANKS! I'm going to show you three numbers – a three number sequence -

- And these three numbers will follow a RULE I have in my HEAD

- Your goal in the game will be to GUESS the RULE I have in my head. BUT – you won’t need to GUESS the rule RIGHT AWAY! –

- INSTEAD of guessing the rule right away, I want you to give ME THREE numbers that you think FIT the rule…

- Then I’ll tell you, YES or NO, whether YOUR three numbers FIT the rule… then, WHEN you’re ready, you can GUESS the RULE…

- Ready to get started?...

- The numbers are: 2, 4, 8 Don’t say your rule out loud yet, but think about it, and write it down if you like. Now again, the way you’ll get information ABOUT the rule is to propose THREE NUMBERS back to me, and then I’ll tell YOU whether they FIT the rule. NOW - - I’m going to ask SOMEONE WHO HASN’T PLAYED the game before to do this…

- What sequence of three numbers do you propose? (Take proposals, say YES or NO, and CONTINUE TO PLAY, taking additional proposals - offer clues if needed, to get to the answer…) Proceed by writing down the rule on a piece of paper so that participants can confirm at the end. The rule is "three increasing numbers." Then provide participants with the initial three numbers: 2, 4, 8. Ask a volunteer to give you three numbers and write them down along with whether or not they fit the rule (yes/no). Then move on to another participant. After 10 participants, have the audience try to guess the rule by writing it on a piece of paper, passing them up, and then tally the responses. Clearly, who is right or wrong isn't the point of the activity. Amazingly, you're going to get a bunch of 10, 12, 14; 20, 22, 24; etc. Participants will tend to believe it's just increasing even numbers. You'll probably end up with someone who wises up and says 3, 5, 7 (which does indeed fit the rule), and then it might transition off to 4, 8, 12; 5, 10, 15 (increasing multiples - which still do fit the rule). What's amazing is that someone will probably give a response that goes against the rule (3, 2, 1), and even after this is a no, people will still go back to even numbers or multiples to still be correct.

- (NOTE the NAME(s) of the Winner(s) – those who FIRST guess the RULE) OK – very interesting, that was fun! Let’s take a look at some cognitive biases that can come into play with this game… Amazingly, when you play this game you usually get a bunch of 10, 12, 14; 20, 22, 24; etc. Participants will tend to believe it's just increasing even numbers. You'll probably end up with someone who wises up and says 3, 5, 7 (which does indeed fit the rule), and then it might transition off to 4, 8, 12; 5, 10, 15 (increasing multiples - which still do fit the rule). What's amazing is that someone will probably give a response that goes against the rule (3, 2, 1), and even after this is a no, people will still go back to even numbers or multiples to still be correct. People often assume the rule is even numbers and just verify their own suspicions. When someone offers something that isn't even numbers, or isn't what they expected, they often just go back to what they know works. This happens even though the best way to know whether you're right is not to gather more support for what you believe, but to rule out the instances in which you could be wrong (these are not the same concepts). To be certain that the rule is increasing even numbers, it is not enough to give a bunch of increasing even numbers every time and be told yes. Instead, give instances of non-increasing and non-even numbers. If you find out that 3, 5, 7 works, clearly being even numbered has nothing to do with it, but increasing numbers may still be a part of it. So, next provide decreasing numbers, or numbers where the gap in between isn't equal across the three numbers (4, 60, 719), or something else that's different.
  Multiple biases can come into play:
  - Anchoring (attachment to your first hypothesis)
  - Confirmation Bias (seeking only to confirm your first hypothesis and not to refute it)
  - Complexity Bias (belief that “complex solutions are better than simple ones”), so strong that we are blind to the simpler solutions
  - Conjunction Fallacy (belief that a detailed condition is more probable than a general one)
  - Possibly a touch of the bandwagon effect, where you go along with other people’s answers regardless of what you really think

  Video 2 4 8: https://www.youtube.com/watch?time_continue=2&v=vKA4w2O61Xo
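
The difference between a confirming probe and a disconfirming one can be sketched in a few lines of Python. This is a minimal illustration, not part of the original talk: the function names are hypothetical, and the player's hypothesis (increasing even numbers) is simply the common guess described in these notes. Only the hidden rule, three increasing numbers, and the example triples come from the notes themselves.

```python
from typing import Callable, Tuple

Triple = Tuple[int, int, int]


def hidden_rule(t: Triple) -> bool:
    """The facilitator's rule from the notes: three increasing numbers."""
    a, b, c = t
    return a < b < c


def increasing_evens(t: Triple) -> bool:
    """A player's (too specific) hypothesis: increasing AND all even."""
    a, b, c = t
    return a < b < c and all(n % 2 == 0 for n in t)


def is_informative(probe: Triple, hypothesis: Callable[[Triple], bool]) -> bool:
    """A probe can overturn a hypothesis only when the facilitator's answer
    differs from what the hypothesis predicted for that probe."""
    return hidden_rule(probe) != hypothesis(probe)


# Example triples taken from the notes.
probes = [(10, 12, 14), (20, 22, 24), (3, 5, 7), (4, 60, 719), (3, 2, 1)]
for probe in probes:
    answer = "YES" if hidden_rule(probe) else "NO"
    verdict = "refutes" if is_informative(probe, increasing_evens) else "is consistent with"
    print(f"{probe}: facilitator says {answer}; this {verdict} 'increasing evens'")
```

In this sketch, probes that the hypothesis already predicts to fit never expose the error, while a triple the hypothesis predicts to fail (odd numbers, unequal gaps) can. Note that the "no" for 3, 2, 1 also agrees with the even-numbers hypothesis, which may be one reason players return to it afterward.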

- Anchoring Bias is the tendency to rely too heavily on an initial piece of information. Anchoring occurs when, during decision making, an individual relies on an initial piece of information to make subsequent judgments. You may NOW notice that you gave a lot of weight to your FIRST hypothesis about the RULE, and perhaps felt anchored to that conviction about your first hypothesis. Also, since I gave an initial example of 2,4,8 which DOES indeed fit some more complex rules, you may have continued to rely on it more than OTHER subsequent observations of three number sequences that were ALSO found to FIT the RULE – or NOT fit the rule - as we continued playing…

- Complexity Bias is the tendency to believe that complex solutions are BETTER than simple ones. Engineers, you may be able to relate to this one. Engineers can be prone to over-engineer problems. This can be a challenge for Machine Learning practitioners, especially now with so much computing power and fancy tools to easily build complex models. This may be a bias we’re taught from a young age, since in school exams the phrase “choose the BEST answer” often means choose the most SPECIFIC answer, and penalizes students for choosing a more GENERAL answer that is also CORRECT. This teaches us that the quote-unquote “BEST” answer is indeed the most complex one.

- This game also illustrates Confirmation Bias – the tendency to find or use evidence in a way that confirms preexisting beliefs. Once you have established a belief, you tend to favor that belief. It can become almost impossible to talk our brains out of some beliefs! So during this game, you may have wanted to PROPOSE only data that would CONFIRM your hypothesis. And you might have avoided proposing numbers that would REFUTE your hypothesis, even though that could give you VALUABLE information to win the game. You might have avoided stepping back even further to consider OTHER hypotheses altogether… SO – let’s take a quick look at TAKEAWAYS from this game…

- 1-ASK “What else?” - Move past the ANCHOR of your first idea or first impression to avoid Anchoring Bias. If you’re familiar with the Agile technique of asking Five Whys, that could be useful! 2-Keep It Simple, Silly. - ASK what SIMPLER explanation exists? Avoid Complexity Bias. 3-Point and counter point – Actively SEEK out COUNTER-arguments and OPPOSING evidence in order to avoid Confirmation Bias. I’m going to turn it over to Caron now, for another game… BUT FIRST – It wouldn’t be a proper GAME if there wasn’t a PRIZE, RIGHT? (hold up a brain…) (WINNER Name(s)), your prize is: a REALISTIC, individually wrapped, fresh SQUISHY brain, approximately the SIZE of a SQUIRREL brain. Congratulations! From now on, we’ll simply refer to these formidable prizes as SQUIRREL BRAINS! OK, Caron – you ready for the next GAME?...

- This game was adapted from a game created by Sandra Fowler. In this exercise, participants stick badges, in a variety of shapes, colors, and sizes, somewhere between their waist and neck. Participants are then instructed to form groups without talking. Once formed, the participants are instructed to break up and form into new groups. This is repeated.

- Usually this is done in person, but because of the space restrictions, we will use this visualization to guide us. Let’s say all of these people are standing in a room.

- In this exercise, participants are wearing badges somewhere between their neck and waist.

- Each badge can vary in shape, color, and size.

- Each person is wearing the badge when they are brought together in a group.

- Ordinarily, the participants would form groups without talking. Today, you will be the one forming groups. So go ahead and form groups with what you see here. I will give you a minute to complete this task.

- So now you will create another set of groups that is different from the groups you just put together. I will give you another minute to do that.

- So we are going to form one more set of groups that are different than the previous two you’ve created.

- For this, think about where you would put yourself. Who would you want to be in a group with? How would you determine which group you would be in?

- Let’s discuss this: How did you form the groups? First? Second? Third? Participants will normally form groups based on shapes, colors, or sizes. Rarely do the participants look beyond the badges, and even less often do they intentionally form diverse groups in which many shapes, colors, and sizes are represented.

- The framing effect is when we react unknowingly to the way things are conveyed to us. Consider the simple example of a pessimist and an optimist. A glass of water is either half-full or half-empty: both are equivalent truths. However, when it is portrayed in a negative frame, you think that the glass is half-empty. If it is portrayed in a positive frame, you see the glass as half-full. Such 'frames' can be used by advertisers to create marketing gimmicks that trick consumers into buying their products. The framing effect can affect how we balance certain factors. In the setup of this game, we framed it so you would ultimately pay attention to the fact that each participant was wearing a badge. We were framing the game to center around the badges’ characteristics, but really how you made groups was open to your interpretation. When this game is done in a co-located group, people can organize by what they are wearing that day, if they have glasses, and so on. If they have deep knowledge of the people in the group, further grouping can be done based on that. In the face of uncertainty people latch on to information that helps give shape, or a frame, to work within. This may even happen when the information before them isn’t necessarily relevant, but it’s used to frame their way of thinking.

- The Availability Heuristic is the tendency to rely on immediate examples that come to mind when evaluating a decision. These are items you may be holding in working memory, so you are most readily able to draw on them. It could be a memory that is recent, frequent, extreme, vivid, or negative. The Framing Effect and the Availability Heuristic can be closely related. How you frame something could affect what is recalled. This also means we see what we want based on information that isn’t necessarily relevant – it is just more available.

- Regardless of how people tend to group themselves, the other thing that usually happens is affinity bias. This is where we gravitate toward people like ourselves in appearance, beliefs, and background. We may even avoid or dislike people who aren’t like us. It is easy to socialize and spend time with others who are similar to us. It requires more effort to bridge differences when diversity is present. This can show up in how we interact with people in the workplace, who we gravitate toward on our team, even what persona we pick during design thinking exercises.

- Lift the veil and see beyond the FRAMING of a situation to avoid the Framing Effect. Ask yourself how your decision would change if it were framed in a different way. ASK “What am I missing?” – Don’t rely on gut impulse or snap conclusions based on what comes to mind. It can lead you to incorrect conclusions. Don’t assume that just because you recall something, it must be important. We have a tendency to weigh judgments toward the latest and greatest piece of information. But this is often only one piece of a bigger whole. Expect that there is more information to be found beyond the information you draw on. This way of thinking can help you avoid the Availability Heuristic. Be relentlessly curious. Ask for stats, ask for background info, ask for alternatives. Seek healthy dissent. Seek debate. Seek diversity and differing views and approaches to avoid Affinity Bias.

- This was part 3 of an experiment done by three researchers: Michael Norton, Dan Mochon, and Dan Ariely.

- I’ve already recruited a participant to help us out with this. They have diligently worked to complete a lego set. Ask: How do you feel about your work? Do you feel you are done?

- So now I need another volunteer. (select volunteer) I am handing you, Participant 2, an IDENTICAL lego set. This set has already been finished. Take a look at the set, inspect it. Is it identical?

- Now let’s go over what we will be doing here today.

- The highest price is called a reservation price. This price will be the maximum you are willing to pay to secure the item. You will come up with a price for each set (yours and your partner’s).

- This price has been selected from an unknown distribution.

- Again, you are naming the highest price you are willing to pay (your reservation price). Write it down.

- What did they bid for each set? Was there a difference between what the ‘build’ participants bid on the item they built vs. their partner’s item? What about the ‘prebuilt’ participants’ bids?

- Open the envelope and declare the winner. Ask how the participants did. Any winners?

- (Have participants talk through their bidding strategy.) Generally, participants bid more for the conditions they were assigned. However, the ‘build’ participants universally applied more value to their own sets than to those of their partners. And the build condition definitely produced much larger values than the prebuilt condition. Some of what might be happening in this experiment is called the endowment effect, where people are more likely to retain an object they own than to acquire that same object when they do not own it. But that doesn’t explain why the people who built their LEGO set tended to place an even higher value on it than those who were given the LEGO set prebuilt. For this, we turn to the IKEA effect.

- If someone has an idea, and has worked on it, they are more likely to cling to the notion that it therefore must be good.

- The idea that someone would pay more for something that they had a hand in creating could also be explained by the sunk cost fallacy. Individuals commit the sunk cost fallacy when they continue a behavior or endeavor as a result of previously invested resources (time, money, or effort) (Arkes & Blumer, 1985). This bias can go hand in hand with the IKEA effect, since we are attached to things we have put effort into or created. We think that because we have “sunk” time and resources into something, we have to continue until we see it through to completion. In the case of the LEGO set, time was spent building it, so it may be harder to let go. There is a willingness to continue to toss resources at it even if it would be more cost effective to let it go.

- If you build it… They might NOT come! Don’t get stuck with an idea just because you’ve built it. Avoid the IKEA Effect. You may value the work you are doing at more than it is actually worth. Let it go – Don’t be FROZEN, holding onto something just because of an investment in it. Be willing to try something Elsa, and avoid the Sunk Cost Fallacy. These biases can be observed when designers become too attached to something they have created. You can fall prey to these biases when a portfolio of project work goes south or market desires shift, but you persist in carrying out the project to completion even though it would cost you less overall to cut and run. Because we perceive those costs as sunk, we have a desire to make good on those costs.

- We’ll walk through a handful of questions from the QUIZ GAME offered at the START of our session… There really are no WRONG answers. You are just showing your humanity. The goal is to LEARN, to CHECK our bias, and to IMPROVE. When we LEARN and IMPROVE, of course our WHOLE TEAM WINS! :-)

- Answer (1) or (3) could indicate the Sunk Cost Fallacy at work. OF COURSE, the real quality of the decision depends on your REASONS for keeping the design…

- This scenario is an all-time CLASSIC illustration of Confirmation Bias… Affinity Bias could also be in play because of the comfort with what is familiar and agreeable.

- “Yes” can indicate the Sunk Cost Fallacy. However, answer number 1, “I’d take a nap,” could ALSO be a rational answer, where you find an alternative use of that time, rather than surrendering it completely to a Sunk Cost Fallacy.

- We also saw Confirmation Bias in play during the 2-4-8 game…

- (IF we have the QUIZ LEADERBOARD available – CHOOSE the TOP N (<=10) to give SQUIRREL BRAIN prizes!)

- Having more automation in our lives – more AI and Machine Learning making decisions – reminds us how important it is to have the HUMAN touch – the importance of EMPATHY in the work we do. We also want to be DATA-DRIVEN and make evidence-based decisions, and to NOT be MISGUIDED by our cognitive biases… Can we foster a CULTURE in our organizations that does BOTH? In our work with games we SEE this type of GAME PLAY being at the INTERSECTION of these concerns. It contrasts our HUMANITY and RATIONALITY side by side. We hope this has been useful, and that it might give you some tools you can take back to your teams. I think Caron is going to wrap up now with some ideas about HOW this might look for your teams...

- We didn’t invent these games; most came from psych experiments. Feel empowered to take the games and play them with your teams. Use them to drive testing: check your bias and actually test things. If you are trying to build a testing culture and convince leadership that testing is needed, the games are useful here too.

- * It is a continuous effort of learning and relearning
  * Change won’t happen overnight – it requires patience and acceptance that we won’t get it right the first time
  * It is easy to see bias in others rather than ourselves – keep working at it and be kind
  * Game on!

- Hope it was fun and informative! Again, the “right” answers in this particular game were not always clear, and seem quite subject to interpretation. Our GOAL here is to have fun and gain AWARENESS more than to get “right” answers. Have a good afternoon. Thanks for joining us.