How to deliver High Performance OpenStack Cloud: Christoph Dwertmann, Vault Systems

Securing OpenStack in Line with the Government ISM and PSPF Controls and How to Deliver High Performance OpenStack Cloud to Address Government Legacy Systems

Audience: Intermediate/Advanced
Topic: Security, Infrastructure, Performance

Abstract: As the CTO of Vault Systems, Christoph will take us through the challenges of implementing ASD’s ISM controls within Vault’s OpenStack cloud to create a Protected-certified OpenStack platform, and give a technical account of some of the optimizations he has made around Ceph on NVMe storage to deliver high-performance storage.

Speaker Bio: Christoph Dwertmann, Vault Systems. Christoph is a full-stack engineer with four years of experience in deploying and securing OpenStack. Fully automated software deployment and self-healing microservice containers are among his current interests. As the CTO of Vault Systems he recently deployed the world’s first pure NVMe Ceph cluster into production. Through his previous network research work for the National Science Foundation (NSF), he gained in-depth knowledge of software-defined networks spanning continents.

OpenStack Australia Day Government - Canberra 2016
https://events.aptira.com/openstack-australia-day-canberra-2016/


- 1. Securing OpenStack with ISM and delivering high-performance storage
  Christoph Dwertmann (CTO), OpenStack Australia Day 2016

- 2. Who we are
  ● Community cloud for Australian federal, state and local government agencies and their partners
  ● Founded 5 years ago
  ● Runs a customized version of OpenStack on Ubuntu
  ● Completed IRAP assessment to UNCLASSIFIED in 2015
  ● Listed on ASD’s Certified Cloud Services List (CCSL)
  ● Provides an ISM-compliant cloud SOE (Standard Operating Environment) to customers
  ● PROTECTED coming soon, SECRET in development
  ● Offices and data centre presence in Canberra and Sydney

- 3. Our technology
  ● High-density Intel Xeon compute platform with converged storage
  ● 512GB RAM per host
  ● Mellanox 100Gbps networking
  ● Pure NVMe Ceph cluster as main storage backend
  ● Cinder/LVM flash storage for best IOPS performance
  ● Multi-region Swift cluster on spinning disks (10TB/disk) with 3x replication across different data centres
  ● Secure Internet Gateway with multiple redundant ISP connections (ICON connectivity coming soon)

- 4. ASD Information Security Manual
  ● Issued annually by the Australian Signals Directorate (ASD)
  ● Objective is to assist Australian government agencies in applying a risk-based approach to protecting their information and systems
  ● 938 controls (2016) designed to mitigate the most likely threats to Australian government agencies

- 5. ISM coverage
  ● Information security documentation
  ● Personnel security
  ● Cyber security incidents
  ● Media security
  ● Roles and responsibilities
  ● Physical security
  ● Information security monitoring
  ● Communications security
  ● Email security
  ● Cryptography
  ● Working off-site

- 6. The ISM and Vault
  ● Vault’s cloud must comply with all ISM controls to pass IRAP assessment (completed in 2015)
  ● Vault must ensure compliance is maintained and new controls are applied
  ● We offer customers a compliant environment, greatly reducing their own compliance requirements
  ● The customer is responsible for ensuring compliance when modifying Vault’s SOE

- 7. ISM and Cloud
  ● The ISM is slowly adopting some cloud-friendly controls
    ○ e.g. Controls 1460-1463: functional separation between server-side computing environments
  ● Some controls are difficult, e.g. email security
    ○ It may be easier not to use email at all
  ● The Evaluated Products List (EPL) contains a lot of outdated hardware and software
  ● Building OpenStack from source allows us to meet ISM requirements; no distribution-packaged OpenStack is secure enough

- 8. ISM and Cloud
  Some controls may not apply.

- 9. ISM and Cloud
  Some controls are not cloud-friendly.

- 10. Example: Media Security
  ● Cinder/LVM maps block storage directly to the customer VM
  ● Cinder supports block device sanitization: shred from the coreutils package overwrites the LVM block device with a random pattern (3 passes in OpenStack)
  Example cinder.conf setting:

      # Method used to wipe old volumes (string value)
      volume_clear=shred
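
As a rough illustration of what `volume_clear=shred` achieves, the Python sketch below overwrites a file or block device in place with random data for a fixed number of passes, syncing after each pass. It is a simplification, not Cinder's code, and it does not reproduce the exact patterns coreutils `shred` writes; the `overwrite` helper is hypothetical and the demo only touches a scratch file.

```python
import os
import tempfile

CHUNK = 1024 * 1024  # write in 1 MiB chunks to bound memory use


def overwrite(path: str, passes: int = 3) -> int:
    """Hypothetical helper: overwrite every byte of `path` with random data.

    Simplified stand-in for a multi-pass shred; returns bytes written per pass.
    """
    with open(path, "r+b") as f:
        size = f.seek(0, os.SEEK_END)  # end offset = size, for files and block devices
        for _ in range(passes):
            f.seek(0)
            remaining = size
            while remaining > 0:
                n = min(CHUNK, remaining)
                f.write(os.urandom(n))
                remaining -= n
            f.flush()             # push Python's buffer to the kernel
            os.fsync(f.fileno())  # force this pass onto stable storage
    return size


if __name__ == "__main__":
    # Demo on a scratch file only; never point this at a device you care about.
    with tempfile.NamedTemporaryFile(delete=False) as tmp:
        tmp.write(b"\x00" * 8192)
    print(overwrite(tmp.name))
    os.remove(tmp.name)
```

One caveat: on flash media, wear levelling and over-provisioning mean an in-place overwrite through the block layer may not reach every physical cell.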

- 11. Can we RMA a faulty drive?
  NO, when sanitization fails and the data on the drive is classified.

- 12. Can we RMA a faulty drive?
  Reclassifying disks through successful sanitization.

- 13. Can we RMA a faulty drive?
  Reclassifying drives through data-at-rest encryption:
  ● Self-encrypting disks: simply change the encryption key (as long as the drive is still accessible)
  ● dm-crypt: Linux-based disk encryption
  ● Swift object encryption (new feature in OpenStack Newton)
  Still need to sanitize the disks!

- 14. Can we RMA a faulty drive?
  YES, if the drive was reclassified to UNCLASSIFIED through sanitization (or encryption plus sanitization) and a formal administrative decision is made to release the unclassified media, or its waste, into the public domain.
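
The decision rule in slides 11 to 14 can be written down as a small function. This is a hypothetical Python sketch of the slides' logic only, not ISM control text or Vault's tooling; the `FaultyDrive` fields are assumptions, and encryption is treated as insufficient on its own, following slide 13's "Still need to sanitize the disks!".

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass
class FaultyDrive:
    """Hypothetical record for a failed drive that held classified data."""
    sanitization_succeeded: bool
    encrypted_at_rest: bool
    formal_release_decision: bool


def rma_decision(d: FaultyDrive) -> Tuple[bool, str]:
    """Encode the slides' rule: may this drive be returned to the vendor?"""
    if not d.sanitization_succeeded:
        # Encryption alone is not enough: the disks still need sanitizing.
        return False, "sanitization failed: drive remains classified"
    route = "encryption + sanitization" if d.encrypted_at_rest else "sanitization"
    if not d.formal_release_decision:
        return False, f"reclassified via {route}, awaiting formal release decision"
    return True, f"reclassified to UNCLASSIFIED via {route}; release approved"


if __name__ == "__main__":
    print(rma_decision(FaultyDrive(True, True, True)))
    print(rma_decision(FaultyDrive(False, True, True)))
```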

- 15. ISM conclusion
  ● A bit of work to implement
  ● Ongoing work to keep up with the latest controls
  ● Not always cloud-friendly
  ● Some creative ideas required to solve tricky controls
  ● Discussions with the IRAP assessor can help
  ● Best practice for IT security
  ● Agencies: Vault Systems did most of the work for you!

- 16. Ceph

- 17. What is Ceph?
  ● Free software storage platform
  ● In development since 2007 (OpenStack: 2010)
  ● Implements object, block and file storage
  ● Runs on commodity hardware
  ● Fault-tolerant, self-healing, self-managing
  ● Block storage supports snapshots, striping, and native integration with KVM and Linux
  ● 6-month release cycle
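
Part of what makes Ceph self-managing is that data placement is computed rather than looked up: an object name hashes to a placement group (PG), and the CRUSH algorithm maps each PG to an ordered set of OSDs. The Python sketch below mimics that two-step flow under simplifying assumptions: SHA-1 and rendezvous (highest-random-weight) hashing stand in for Ceph's rjenkins hash and CRUSH, failure domains such as hosts are ignored, and the pool name, object name and 40-OSD list are hypothetical, so it will not reproduce real Ceph placements.

```python
import hashlib
from typing import List


def _hash(*parts: str) -> int:
    """Stable 64-bit hash (SHA-1 stand-in for Ceph's rjenkins hash)."""
    digest = hashlib.sha1("/".join(parts).encode()).digest()
    return int.from_bytes(digest[:8], "big")


def object_to_pg(obj_name: str, pg_num: int) -> int:
    """Step 1: object name -> placement group (plain modulo, simplified)."""
    return _hash(obj_name) % pg_num


def pg_to_osds(pool: str, pg: int, osds: List[str], replicas: int) -> List[str]:
    """Step 2: PG -> ordered OSD list via rendezvous hashing (CRUSH stand-in).

    Every client computes the same answer from the same OSD list, so no
    central lookup table is needed. The first OSD acts as the primary.
    """
    ranked = sorted(osds, key=lambda osd: _hash(pool, str(pg), osd), reverse=True)
    return ranked[:replicas]


if __name__ == "__main__":
    cluster = [f"osd.{i}" for i in range(40)]
    pg = object_to_pg("rbd_data.1234.0000000000000000", pg_num=1024)
    print(pg, pg_to_osds("volumes", pg, cluster, replicas=2))
```

Rendezvous hashing was picked for the sketch because removing an OSD only remaps the PGs that had selected it, which is the kind of limited data movement CRUSH is designed for.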

- 19. OSD Data Flow

- 21. Why are we using Ceph?
  ● We like open source
  ● We don’t like to depend on a single vendor for support, RMA and upgrades
  ● OpenStack integration second to none (cinder-volume, cinder-backup, nova, keystone, Swift alternative)
  ● Mature, large online community, active development
  ● Versatile (block, object, network file system)
  ● Distributed (no downtime on upgrades)
  ● Supports copy-on-write for fast instance creation
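
Copy-on-write is why instance creation is fast: a new VM disk starts as a clone that references a shared parent image and stores only the data it later changes. The toy Python model below illustrates the idea; the class names are hypothetical, and real RBD clones reference a snapshot of the parent image and track data per RADOS object rather than per block as here.

```python
from typing import Dict


class BaseImage:
    """Read-only parent, e.g. a golden OS image snapshot (toy model)."""

    def __init__(self, blocks: Dict[int, bytes]):
        self._blocks = dict(blocks)

    def read(self, idx: int) -> bytes:
        return self._blocks.get(idx, b"")  # unwritten blocks read as empty


class CowClone:
    """Toy copy-on-write clone: creating one copies no data at all."""

    def __init__(self, parent: BaseImage):
        self.parent = parent
        self.overlay: Dict[int, bytes] = {}  # only blocks this clone has written

    def read(self, idx: int) -> bytes:
        # Serve the clone's own data if present, else fall through to the parent.
        if idx in self.overlay:
            return self.overlay[idx]
        return self.parent.read(idx)

    def write(self, idx: int, data: bytes) -> None:
        self.overlay[idx] = data  # the parent is never modified


if __name__ == "__main__":
    golden = BaseImage({0: b"kernel", 1: b"default-config"})
    vm1, vm2 = CowClone(golden), CowClone(golden)
    vm1.write(1, b"vm1-config")
    print(vm1.read(1), vm2.read(1), len(vm1.overlay))
```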

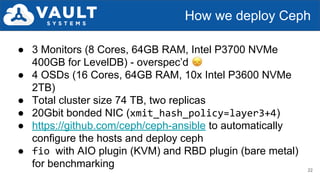

- 22. How we deploy Ceph
  ● 3 monitors (8 cores, 64GB RAM, Intel P3700 400GB NVMe for LevelDB), overspecced
  ● 4 OSD hosts (16 cores, 64GB RAM, 10x Intel P3600 2TB NVMe each)
  ● Total cluster size 74TB, two replicas
  ● 20Gbit bonded NIC (xmit_hash_policy=layer3+4)
  ● https://github.com/ceph/ceph-ansible to automatically configure the hosts and deploy Ceph
  ● fio with the AIO engine (in KVM guests) and the RBD engine (on bare metal) for benchmarking
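
The slide names fio's AIO and RBD engines but not the job parameters. The Python sketch below renders two plausible fio job files; treating `libaio` as the AIO engine, and the queue depths, runtime, pool `rbd`, image `fio-test`, client `admin` and guest device `/dev/vdb`, are all assumptions for illustration, not Vault's benchmark settings.

```python
from typing import Dict

# Hypothetical targets: pool/image names and the guest device path are placeholders.
TARGETS: Dict[str, Dict[str, str]] = {
    # Bare metal: fio talks to the cluster directly through librbd.
    "rbd": {"ioengine": "rbd", "clientname": "admin", "pool": "rbd", "rbdname": "fio-test"},
    # Inside a KVM guest: Linux native AIO on the attached volume, bypassing the page cache.
    "libaio": {"ioengine": "libaio", "direct": "1", "filename": "/dev/vdb"},
}


def fio_job(engine: str, rw: str, bs: str, iodepth: int, runtime_s: int = 60) -> str:
    """Render a minimal fio job file as text (sketch; tune before real use)."""
    if engine not in TARGETS:
        raise ValueError(f"unknown engine: {engine}")
    opts = dict(TARGETS[engine], rw=rw, bs=bs, iodepth=str(iodepth), runtime=str(runtime_s))
    lines = ["[global]"] + [f"{key}={value}" for key, value in opts.items()]
    lines += ["time_based", "", f"[{rw}-{bs}]"]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # 4K random reads (as in the IOPS figures) and 1MB sequential reads.
    print(fio_job("rbd", "randread", "4k", iodepth=32))
    print(fio_job("libaio", "read", "1m", iodepth=16))
```

Save the output as, for example, `randread.fio` and run `fio randread.fio`; for the RBD engine the image must already exist in the pool.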

- 23. Performance observations
  ● Sequential R/W 1MB: saturates the 20Gbps port bandwidth on all four OSD hosts, 7.5GB/s in total
  ● Random read 4K: >500K IOPS measured from bare-metal nodes
    ○ Could be improved by running more than one OSD per disk
  ● Max 50K IOPS in a single KVM guest with jemalloc, limited by librbd memory allocation performance (35K with the default malloc)
  ● Latency 1ms; Cinder/LVM beats it (0.6ms)
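
A quick back-of-the-envelope check puts these numbers in proportion. The Python sketch below only does arithmetic on the slide's figures; it assumes decimal units (1 GB = 10^9 bytes) and 4KiB blocks for the 4K test, and uses Little's law to show how per-I/O latency caps IOPS at a given queue depth.

```python
def gbit_to_gbyte(gbit_per_s: float) -> float:
    return gbit_per_s / 8  # 8 bits per byte


def iops_to_gbyte(iops: float, block_bytes: int) -> float:
    return iops * block_bytes / 1e9  # decimal GB/s


def iops_from_latency(latency_s: float, queue_depth: int = 1) -> float:
    # Little's law: outstanding I/Os = throughput x latency
    return queue_depth / latency_s


if __name__ == "__main__":
    line_rate = 4 * gbit_to_gbyte(20)  # four OSD hosts, 20Gbps bond each
    print(f"aggregate line rate: {line_rate:.1f} GB/s")              # 10.0 GB/s
    print(f"7.5 GB/s is {7.5 / line_rate:.0%} of it")                # 75%
    print(f"500K x 4KiB = {iops_to_gbyte(500_000, 4096):.2f} GB/s")  # 2.05 GB/s
    for name, lat in (("Ceph RBD", 1e-3), ("Cinder/LVM", 0.6e-3)):
        print(f"{name}: ~{iops_from_latency(lat):.0f} IOPS at queue depth 1")
```

At queue depth 1 the latency gap is worth roughly 1.7x in IOPS (about 1000 versus 1667), so reaching tens of thousands of IOPS requires many I/Os in flight at once.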

- 24. Future work on Ceph
  ● Patch ceph-ansible to support more than one OSD per disk (2 for SSD, 4 for NVMe)
  ● Move to a converged compute/storage architecture
  ● Use RDMA in the latest Ceph release to improve latency/IOPS
  ● Deploy Ceph BlueStore when it’s ready for production
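
Running several OSDs per NVMe device means dividing each drive into equal slices, one per OSD daemon. The helper below is a hypothetical Python sketch of that layout arithmetic only; it is not how ceph-ansible does it and it does not touch any disk. The 1 MiB alignment and the 1 MiB reserved at each end for GPT structures are assumptions.

```python
from typing import List, Tuple

MIB = 1 << 20


def split_device(size_bytes: int, osds_per_device: int, align: int = MIB) -> List[Tuple[int, int]]:
    """Hypothetical helper: carve a device into equal, aligned [start, end) ranges.

    The first and last `align` bytes are left free for the GPT headers.
    """
    if osds_per_device < 1:
        raise ValueError("need at least one OSD per device")
    usable = size_bytes - 2 * align
    per_osd = (usable // osds_per_device) // align * align  # round down to alignment
    if per_osd <= 0:
        raise ValueError("device too small for that many OSDs")
    return [(align + i * per_osd, align + (i + 1) * per_osd) for i in range(osds_per_device)]


if __name__ == "__main__":
    nvme = 2_000_000_000_000  # nominal 2TB NVMe (decimal), as in the cluster above
    for start, end in split_device(nvme, 4):
        print(f"{start:>16} {end:>16}  {(end - start) / 1e9:.1f} GB")
```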

- 25. Credits
  ● Slide 8: http://www.theradiohistorian.org/
  ● Slide 19: Jian Zhang (Intel)
  ● Slide 20: Sage Weil (Red Hat)
  ● Slide 24: http://cryptid-creations.deviantart.com/art/Daily-Paint-986-Octopus-vs-Cookie-Jar-OA-551061037