Fcv learn fergus

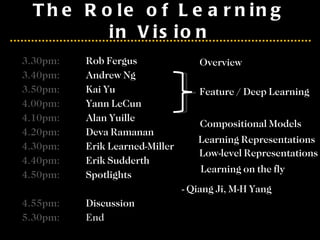

- 1. The Role of Learning in Vision
  - 3.30pm: Rob Fergus
  - 3.40pm: Andrew Ng
  - 3.50pm: Kai Yu
  - 4.00pm: Yann LeCun
  - 4.10pm: Alan Yuille
  - 4.20pm: Deva Ramanan
  - 4.30pm: Erik Learned-Miller
  - 4.40pm: Erik Sudderth
  - 4.50pm: Spotlights - Qiang Ji, M-H Yang
  - 4.55pm: Discussion
  - 5.30pm: End
  - Session labels: Feature / Deep Learning; Compositional Models; Learning Representations; Overview; Low-level Representations; Learning on the fly

- 2. An Overview of Hierarchical Feature Learning and Relations to Other Models. Rob Fergus, Dept. of Computer Science, Courant Institute, New York University

- 6. Single Layer Architecture. Input (Image Pixels / Features) → Filter → Normalize → Pool → Output (Features / Classifier). Details in the boxes matter (especially in a hierarchy). Links to neuroscience.

- 7. Example Feature Learning Architectures. Pixels / Features → Filter with Dictionary (patch/tiled/convolutional) + Non-linearity → Normalization between feature responses → Spatial/Feature pooling (Sum or Max) → Features. Normalization options: Local Contrast Normalization (Subtractive / Divisive); (Group) Sparsity; Max / Softmax.
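The Filter, Normalize, and Pool stages named on slides 6 and 7 can be sketched in plain Python as follows. This is a minimal illustration under stated assumptions, not the architecture from the talk: the function names, the two hand-written 2x2 filters, the L2 normalization across feature maps, and the non-overlapping max pooling are all hypothetical choices made for brevity.

```python
# Minimal single-layer sketch: filter -> normalize -> pool.
# All names, filter values, and sizes are illustrative assumptions.

def correlate_valid(image, kernel):
    """Slide `kernel` over `image` (lists of lists) without padding."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[y + i][x + j] * kernel[i][j]
                for i in range(kh) for j in range(kw))
            for x in range(out_w)
        ]
        for y in range(out_h)
    ]

def normalize_across_features(maps, eps=1e-8):
    """Divide each location's responses by their L2 norm across feature maps."""
    h, w = len(maps[0]), len(maps[0][0])
    out = [[[0.0] * w for _ in range(h)] for _ in maps]
    for y in range(h):
        for x in range(w):
            norm = sum(m[y][x] ** 2 for m in maps) ** 0.5 + eps
            for c, m in enumerate(maps):
                out[c][y][x] = m[y][x] / norm
    return out

def max_pool(fmap, size=2):
    """Max over non-overlapping size x size spatial windows of ONE feature map."""
    h, w = len(fmap) // size, len(fmap[0]) // size
    return [
        [
            max(fmap[y * size + i][x * size + j]
                for i in range(size) for j in range(size))
            for x in range(w)
        ]
        for y in range(h)
    ]

def single_layer(image, filters, pool_size=2):
    """Apply the three stages in order and return one pooled map per filter."""
    filtered = [correlate_valid(image, k) for k in filters]
    normalized = normalize_across_features(filtered)
    return [max_pool(m, pool_size) for m in normalized]

# Toy input: a 5x5 image with a vertical edge, and two hypothetical 2x2 filters.
image = [[0, 0, 1, 1, 1] for _ in range(5)]
filters = [
    [[-1, 1], [-1, 1]],   # responds to vertical edges
    [[-1, -1], [1, 1]],   # responds to horizontal edges
]
features = single_layer(image, filters)
```

In this toy run only the vertical-edge map responds. Pooling is applied over space within each feature map, never across maps, which is one reason the choice made inside each box matters once layers are stacked.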

Editor's Notes

- Winder and Brown paper. Slightly smoothed view of things.

- Note pooling is across space, not across Gabor channel

- Non-maximal suppression across visual words (VW), similar to an L-infinity (L-Inf) normalization

- Note pooling is across space, not across Gabor channel
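One possible reading of the note comparing non-maximal suppression with an L-infinity normalization is sketched below. Both operations are driven by the single largest response at a location: suppression keeps only that response, while L-infinity normalization rescales every response by it. The function names and the example response values are hypothetical, and the interpretation itself is an assumption rather than something the notes spell out.

```python
# Sketch: non-maximal suppression vs. L-infinity normalization over the
# responses of several channels (e.g. visual words) at ONE spatial location.
# Function names and values are illustrative assumptions.

def non_max_suppress(responses):
    """Keep only the strongest response; set all others to zero."""
    winner = max(range(len(responses)), key=lambda k: abs(responses[k]))
    return [r if k == winner else 0.0 for k, r in enumerate(responses)]

def linf_normalize(responses):
    """Scale responses so the largest magnitude becomes 1."""
    peak = max(abs(r) for r in responses) or 1.0  # avoid dividing by zero
    return [r / peak for r in responses]

responses = [0.2, 1.5, 0.3]
suppressed = non_max_suppress(responses)  # [0.0, 1.5, 0.0]
scaled = linf_normalize(responses)        # the winning entry becomes 1.0
```

Both functions act across channels at a fixed location, whereas the pooling mentioned in the other notes acts across space within a fixed channel.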